Posts posted by ScratchCat

-

-

Are you able to reproduce the behavior on a Linux Live USB to determine if the problem is software or hardware related?

-

$124 for a 500GB SSD is extremely high. You can get a 500GB 980 (non-Pro) for half that price.

-

16 hours ago, Moonzy said:

dont believe me? try using HDD only, and 1Mbit internet

There is a difference between using modern programs on a hard drive and programs written when practically all computers used a hard drive. Windows XP is reasonably smooth even on a two-decade-old laptop, while Windows 10 is practically unusable for a good 15 minutes after startup if installed on a HDD. The control panel on a Celeron M is more responsive than the settings app on an R7 4800U.

The same goes for websites. Have a look at some simple, JavaScript-free webpages and try opening them on old devices. It won’t be as instant as on newer hardware but it isn’t slow by any means.

-

-

Summary

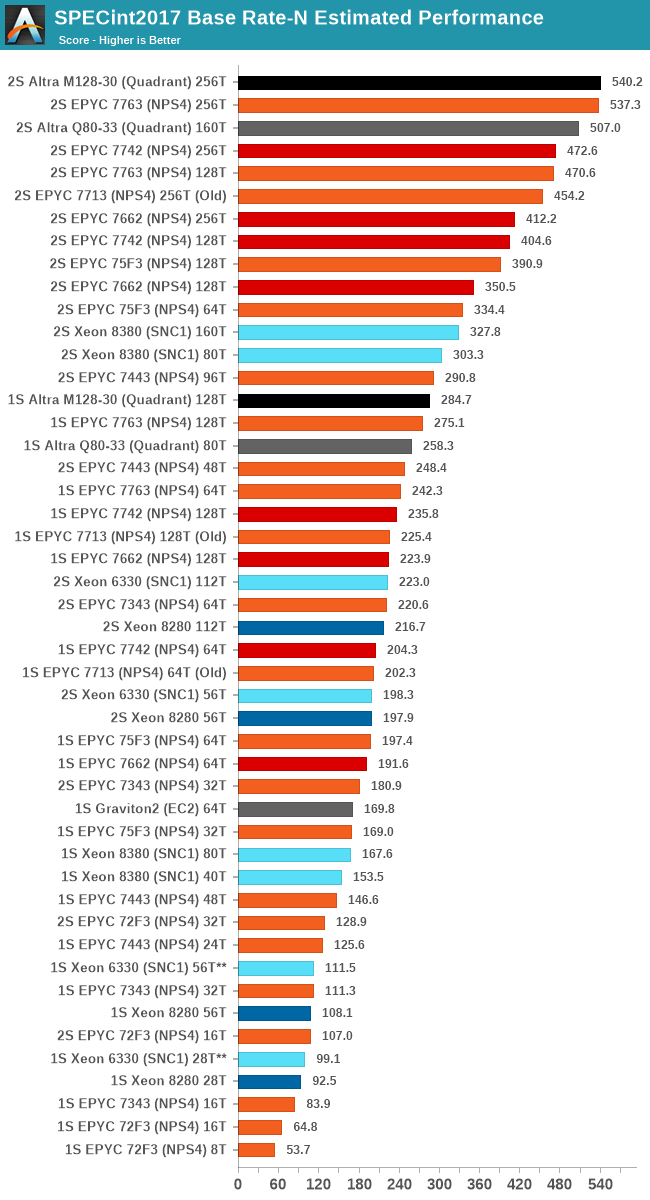

Ampere has released their new ARM based CPU lineup sporting up to 128 cores on a single die on TSMC's 7nm node. Ampere's first generation Q80-33 had up to 80 of Arm's Neoverse-N1 cores clocked at 3.3 GHz along with 32MB of L3. The M128-30 adds 60% more cores but clocks 300MHz lower at 3GHz and halves the L3 cache to 16MB.

Inter-socket communication has improved drastically over the previous generation, reducing latency by a third.

Quote

The results are that socket-to-socket core latencies have gone down from ~350ns to ~240ns, and the aforementioned core-to-core within a socket with a remote cache line from ~650ns to ~450ns – still pretty bad, but undoubtedly a large improvement.

Workloads without large memory bandwidth needs see good scaling with the extra cores despite the lower clockspeed.

Quote

Workloads such as 525.x264_r, 531.deepsjeng_r, 541.leela_r, and 548.exchange2_r, have one large commonality about them, and that is that they’re not very memory bandwidth hungry, and are able to keep most of their working sets within the caches. For the Altra Max, this means that it’s seeing performance increases from 38% to 45% - massive upgrades compared to the already impressive Q80-33.

Memory bandwidth hungry workloads are held back by the tiny 16MB cache (Epyc has 256MB for 64 cores) with some applications performing worse than on an equally clocked Q80-33.

Quote

505.mcf_r is the worst-case scenario, although memory latency sensitive, it also has somewhat higher bandwidth that can saturate a system at higher instance count, and adding cores here, due to the bandwidth curve of the system, has a negative impact on performance as the memory subsystem becomes more and more inefficient. The same workload with only 32 or 64 instances scores 83.71 or 101.82 respectively, much higher than what we’re seeing with 128 cores.
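To put the cache complaint in numbers, here is a quick sketch that divides the L3 sizes mentioned in this post by the core counts. The figures are the ones quoted above (32MB for 80 cores, 16MB for 128 cores, 256MB for a 64 core Epyc); the helper name `l3_per_core_mb` is just made up for the example.

```python
# L3 cache per core, using only the figures quoted in this post.
chips = {
    "Ampere Q80-33": (80, 32),         # (cores, L3 in MB)
    "Ampere M128-30": (128, 16),
    "AMD Epyc (64 cores)": (64, 256),
}


def l3_per_core_mb(cores, l3_mb):
    """Return the average amount of L3 (in MB) available per core."""
    return l3_mb / cores


for name, (cores, l3_mb) in chips.items():
    print(f"{name}: {l3_per_core_mb(cores, l3_mb):.3f} MB of L3 per core")
```

This ignores the private L2 (1MB per core on the Altra), so it only shows how thin the shared L3 is spread, not the total cache a core can use.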

Quote

In the FP suite, we’re seeing a same differentiation between the M128-80 and the other systems. In anything that is more stressful on the memory subsystem, the new Mystique chip doesn’t do well at all, and most times regresses over the Q80-33.

In anything that’s simply execution bound, throwing in more execution power at the problem through more cores of course sees massive improvements. In many of these cases, the M128-30 can now claim a rather commanding lead over the competition Milan chip, and leaving even Intel’s new Ice Lake-SP in the dust due to the sheer core count and efficiency advantage.

Single thread performance actually regresses relative to the Q80-33 due to the combination of lower clockspeed and half the L3.

Overall the new chip isn't clearly better than the previous generation, providing massive improvements in some workloads but kneecapping performance in anything which requires more cache per core.

Quote

The Altra Max is a lot more dual-faced than other chips on the market. On one hand, the increase of core count to 128 cores in some cases ends up with massive performance gains that are able to leave the competition in the dust. In some cases, the M128-30 outperforms the EPYC 7763 by 45 to 88% in edge cases, let’s not mention Intel’s solutions.

On the other hand, in some workloads, the 128 cores of the M128 don’t help at all, and actually using them can result in a performance degradation compared to the Q80-33, and also notable slower than the EPYC competition.

My thoughts

The M128-30 seems quite impressive on paper: 128 N1 cores at 3 GHz with a TDP of 250W. Single thread performance wasn't terrible on the Q80-30, so this should have been a real threat to Epyc. However, the 16MB L3 drags down performance in memory intensive workloads, sometimes below that of the older Q80-33. Compute heavy and cost sensitive workloads are the likely target here, with two chips costing 25% less than Epyc CPUs with a similar level of performance.

Source

https://www.anandtech.com/show/16979/the-ampere-altra-max-review-pushing-it-to-128-cores-per-socket

-

Try disabling BD PROCHOT in ThrottleStop. Some laptop manufacturers force the CPU to throttle when a non-OEM power supply is detected (or the power supply detection fails).

Source (for a Dell laptop, perhaps Lenovo has a similar thing): https://superuser.com/questions/1160735/stop-dell-from-throttling-cpu-with-power-adapter

-

3 hours ago, leadeater said:

https://www.anandtech.com/show/16315/the-ampere-altra-review

This is really just a product refresh to offer more cores, the above is a review of the 80 core 250W CPU with the same ARM microarchitecture so it's a rather good indicator of performance. Since the reviewed 80 core CPU is also 250W you should only expect a more moderate improvement, 60% more cores with probably like ~30% more performance.

The 80 core version has a significant amount of power headroom in most workloads, averaging around 200W. 128 cores would put it over the 250W TDP but it looks like aiming for a slightly less aggressive frequency would keep power in check.

Quote

In terms of power-efficiency, the Q80-33 really operates at the far end of the frequency/voltage curves at 3.3GHz. While the TDP of 250W really isn’t comparable to the figures of AMD and Intel are publishing, as average power consumption of the Altra in many workloads is well below that figure – ranging from 180 to 220W – let’s say a 200W median across a variety of workloads, with few workloads actually hitting that peak 250W.

The main problem I foresee is the tiny 16MB L3. That's half of the L3 the 80 core version had and it already was becoming cache starved in certain scenarios.

Quote

There are still workloads in which the Altra doesn’t do as well – anything that puts higher cache pressure on the cores will heavily favours the EPYC as while 1MB per core L2 is nice to have, 32MB of L3 shared amongst 80 cores isn’t very much cache to go around.

-

1 minute ago, Nathanpete said:

At this point I'm willing to consider that maybe that software does have a bug with storage units because such an old drive, even if it was SLC, should have died at less than 500GB.

According to the spec sheet, the drive is only rated for 72TBW, so either the counter is broken (which somewhat invalidates the rest of the SMART data) or it's one very lucky sample. For reference, a 256GB MLC Samsung 840 Pro died after 2.2PB written.

-

2 minutes ago, Nathanpete said:

You do realize that while the SSD does track reads, reading does absolutely zero damage to the drive. You can read the drive for fucking forever. It is writes that hurt the NAND flash inside. That is why SSDs have both a warranty void after a time period and after a certain TBW or Terabytes written because at that point it is likely the SSD is very damaged by just plain usage, and the manufacturer shouldn't be responsible for that.

It also has had >1PB of writes which is insane for a 128GB drive.
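For a sense of scale, here is a rough sketch of how many times the whole drive would have been rewritten. It assumes exactly 1PB written (the counter reports more than that), decimal units (1PB = 1000TB), and it ignores write amplification; `full_drive_writes` is just a name made up for the example.

```python
def full_drive_writes(written_tb, capacity_gb):
    """Number of times the full capacity has been written (decimal units)."""
    return written_tb * 1000 / capacity_gb


# At least 1 PB written to a 128 GB drive, as reported in this thread.
print(f"{full_drive_writes(1000, 128):.0f} full drive writes at minimum")
```

That works out to several thousand complete overwrites, which is why the number looks suspicious for a small consumer drive.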

-

2 hours ago, WolframaticAlpha said:

I'd love to see the reactions of the people who were trashing on x86 for having vulnerabilities.

This is a much less dangerous vulnerability than Spectre or Meltdown. It requires two processes which want to communicate with each other via a covert channel. Meltdown and Spectre on the other hand allow the attacker to read the memory of a (possibly privileged) process which doesn't want to communicate with the attacker.

-

2 hours ago, WolframaticAlpha said:

I may sound naive, but what is the problem in data collection?Do you want randm crap on the internet or stuff that you actually care about? Also the whole privacy talk is redundant, cuz a megacorp like fb/amzn or msft aren't going to lose your data. The main driving factor behind privacy was the chance of leaked data, and imo it is much better that facebook or msft store nd collect data than disk-cord.

Remember Cambridge Analytica? Where the personal data of 87 million Facebook accounts was scraped? Or when Facebook stored hundreds of millions of passwords in plaintext?

They might be better at protecting it but nothing is completely secure. It doesn't help that their only reason for doing so is that they can sell the info for more if they are the only ones with access.

-

1 hour ago, Hyrogenes said:

So if ethereum turns into proof of stake how do you get money from it? Just by owning some of it?

The current way something like Bitcoin is mined is by shuffling around numbers in the block header until its hash meets some difficulty criterion. For instance, you can change the nonce value or include different transactions; both change the hash.
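As a rough illustration of that nonce search, here is a minimal sketch. It is not Bitcoin's real header format or difficulty encoding: the header is an arbitrary byte string, the difficulty is expressed as a number of leading zero bits, and the function name `mine` is made up for the example. Only the double SHA-256 is borrowed from Bitcoin.

```python
import hashlib


def mine(header: bytes, difficulty_bits: int, max_nonce: int = 2**32):
    """Try nonces until the double SHA-256 of header+nonce is below the target.

    Simplified sketch: a real block header has fixed fields and the target
    is encoded differently, but the brute-force loop is the same idea.
    """
    target = 1 << (256 - difficulty_bits)
    for nonce in range(max_nonce):
        data = header + nonce.to_bytes(4, "little")
        digest = hashlib.sha256(hashlib.sha256(data).digest()).digest()
        if int.from_bytes(digest, "big") < target:
            return nonce, digest.hex()
    return None  # nonce space exhausted; a miner would now change the header


result = mine(b"example block header", difficulty_bits=12)
print(result)
```

Every extra difficulty bit doubles the expected number of hashes, which is where the power consumption comes from.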

Proof of stake instead uses your unspent transactions (coins) to generate the block hash by hashing parts of the transaction. More value in a transaction lowers the difficulty, so the more money you have (the bigger your stake) the more likely you are to find the block hash.

Since you have a finite number of transactions there are only a small number of attempts you can make, meaning the power consumption is low.
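A toy version of that idea, to show why the number of attempts is so small. This is a sketch under my own assumptions, not how any particular coin does it: the inputs to the hash, the way the target scales with value, and all the names (`kernel_hash`, `can_stake`, `find_stake`, `base_target`) are made up for illustration. The linked article covers a real scheme.

```python
import hashlib


def kernel_hash(prev_block_hash: bytes, utxo_id: bytes, timestamp: int) -> int:
    """Hash built from things the staker cannot freely grind (no nonce)."""
    data = prev_block_hash + utxo_id + timestamp.to_bytes(8, "little")
    return int.from_bytes(hashlib.sha256(data).digest(), "big")


def can_stake(prev_block_hash, utxo_id, value, timestamp, base_target):
    """More value in the unspent output means a larger (easier) target."""
    return kernel_hash(prev_block_hash, utxo_id, timestamp) < base_target * value


def find_stake(prev_block_hash, utxos, timestamp, base_target):
    """One attempt per unspent output per time slot; that is all you get."""
    for utxo_id, value in utxos:
        if can_stake(prev_block_hash, utxo_id, value, timestamp, base_target):
            return utxo_id
    return None


wallet = [(b"utxo-a", 5), (b"utxo-b", 200)]
print(find_stake(b"previous block", wallet, 1_600_000_000, 2**248))
```

With two unspent outputs you get two hashes per time slot rather than billions per second, so there is nothing to throw hardware at.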

See here for more info: http://earlz.net/view/2017/07/27/1904/the-missing-explanation-of-proof-of-stake-version

-

4 minutes ago, Duhanz said:

When it it is not powered and you spin by hand it stops really quick...and i got also 1050 ti and its fan spins 3x longer!

The way the fans are designed means they stop quite quickly.

The 1050 Ti is a more power hungry card, so it's normal for it to spin faster than a 730. If temperatures aren't a problem, don't worry about it.

-

What card are you using?

It shouldn't affect the lifetime of the fan heavily. Servers operate with fan speeds near the maximum constantly and you don't hear about those breaking all the time.

-

1 hour ago, ragnarok0273 said:

What is with companies and "X" things?

5600X CPU.

X17 mouse.

X65 modem.

X99 chipset.

3D Xpoint.

Has the Intel way of X = better leaked out into the world?

-

As long as it’s not conductive or interfering with any spinning fans it will be fine.

-

20 minutes ago, ComputerBuilder said:

i did that like 10 times in a row in matter of fact, but ill do again

If you haven’t used it in a while just check the sources are for Debian buster and not some older version. You can check by reading /etc/apt/sources.list.d/raspi.list (or similar files).
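If you'd rather not read the files by eye, here is a small sketch that lists which release names the apt sources point at. It assumes the classic one-line `deb ...` format in `/etc/apt/sources.list` and `/etc/apt/sources.list.d/*.list`; the function names are made up for the example.

```python
import re
from pathlib import Path


def suites_in(text):
    """Return the release names (e.g. 'buster') used by deb/deb-src lines."""
    suites = set()
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()   # drop comments
        line = re.sub(r"\[.*?\]", "", line)    # drop [arch=...] style options
        fields = line.split()
        if len(fields) >= 3 and fields[0] in ("deb", "deb-src"):
            suites.add(fields[2])
    return suites


def report(apt_dir="/etc/apt"):
    """Print the suites found in each sources file under apt_dir."""
    base = Path(apt_dir)
    files = [base / "sources.list", *sorted((base / "sources.list.d").glob("*.list"))]
    for path in files:
        if path.is_file():
            print(path, sorted(suites_in(path.read_text())))


if __name__ == "__main__":
    report()
```

If anything other than buster shows up (stretch, for example), that file is still pointing at an older release.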

-

2 hours ago, papajo said:

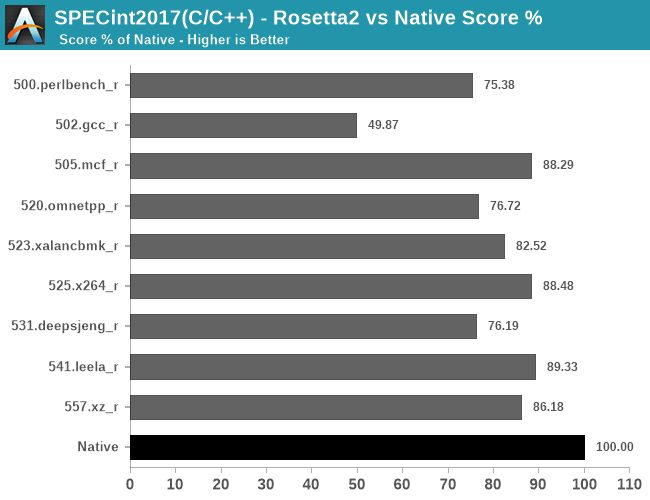

That's not the case and surely some marketing sugarquoting what rosetta 2 does is emulation essentially it translates x86 instructions to ARM instructions.

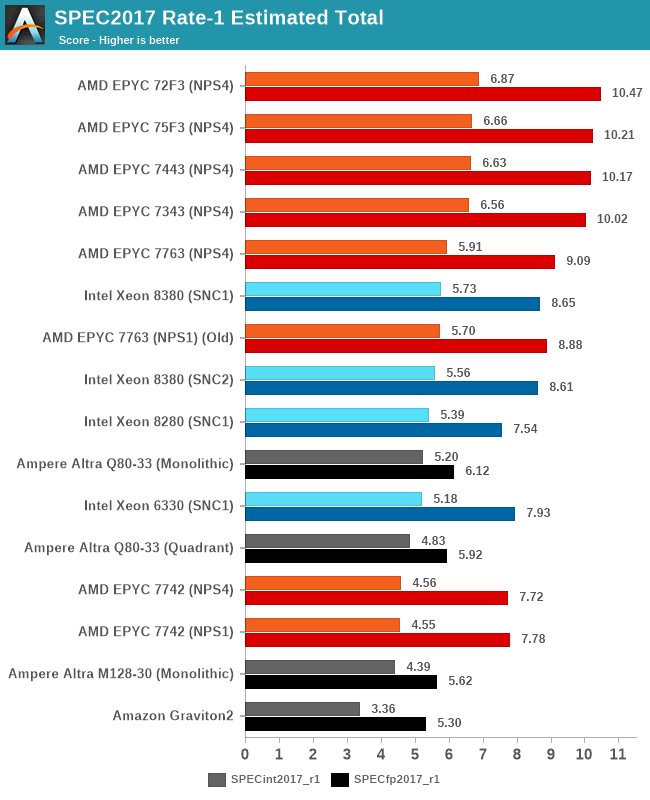

Regardless of how it is done, 70-80% of native performance is nothing to be sneered at. In fact, because of the performance jump it can be faster to run Rosetta programs on the M1 over the x86 chip used before.

2 hours ago, papajo said:

It has 4 cores with higher boost frequency and 4 cores with lower boost frequency that's how all arm CPUs are marketed = total core count and it is not the first time this is implemented.

The M1 Firestorm and Icestorm cores are completely different. This isn't some low end SoC using two sets of 4 A53s for "8 core marketing purposes". The smaller Icestorm cores are already almost as performant as older A72/73 designs.

2 hours ago, papajo said:

An ARM chip can never compete against a x86 chip when power consumption and/or thermal management is not an issue.

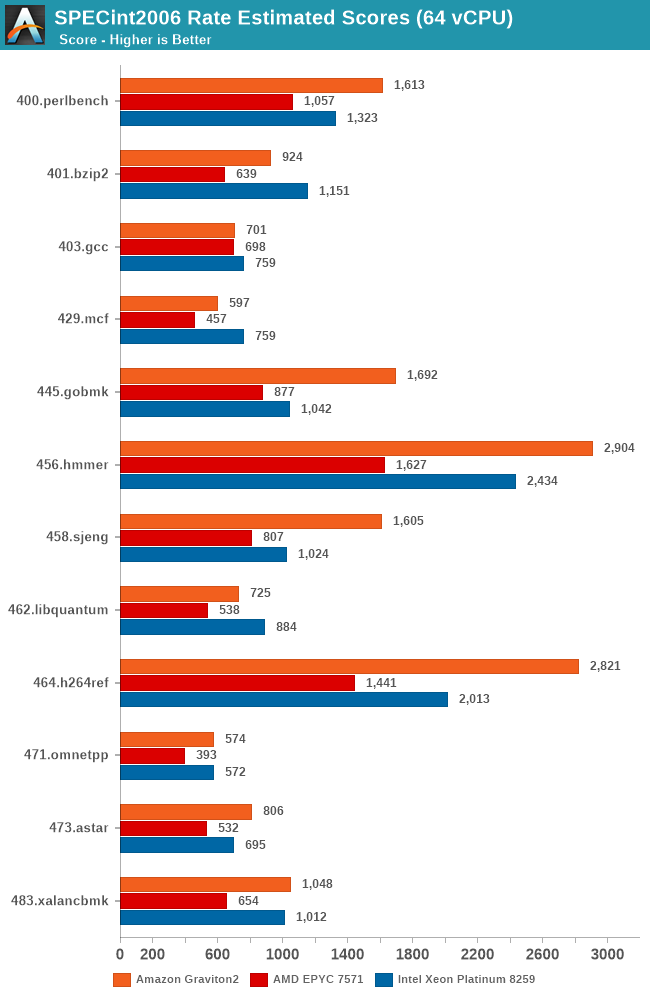

Amazon's Graviton2 (full fat 64 core ARM server processor) would like a word with you:

-

1 hour ago, Sauron said:

Other than that the GPIO layout is not exactly the same as an arduino nano so depending on which you want to use you'll have to adapt your code accordingly.

Since this is partly a microcontroller silicon launch (RP2040) with a bunch of third-party models, Arduino will be launching their own board with this silicon. I wouldn't be surprised if their model has an Arduino compatible pinout.

Quote

But there’s more! We are going to port the Arduino core to this new architecture in order to enable everyone to use the RP2040 chip with the Arduino ecosystem (IDE, command line tool, and thousands of libraries). Although the RP2040 chip is fresh from the plant, our team is already working on the porting effort… stay tuned.

Quote

Arduino Nano RP2040 Connect

Arduino joins the RP2040 family with one of its most popular formats: the Arduino Nano. The Arduino Nano RP2040 Connect combines the power of RP2040 with high-quality MEMS sensors (a 9-axis IMU and microphone), a highly efficient power section, a powerful WiFi/Bluetooth module, and the ECC608 crypto chip, enabling anybody to create secure IoT applications with this new microcontroller. The Arduino Nano RP2040 Connect will be available for pre-order in the next few weeks.

-

1 hour ago, Kilrah said:

That won't work since he has a drive array, he needs most of them online at the same time.

Theoretically he could image the drives one by one and mount the images. However that requires enough space for the minimum number of drive images as well as space to move the files to a new pool.

-

On 1/12/2021 at 1:38 PM, Spindel said:

Why use a screenprotector at all?

I’ve never had it or a case and have never broken a smartphone (bought my first smartphone in 2007). Over time I have gotten some light scratches on the screen, but never anything that is distracting and never anything worse than you get on a plastic film (which is distracting).

Accidents happen and the glass screen protectors offer almost the same feel as the original glass. Plus the resale value should be higher if the display is pristine.

-

On 1/12/2021 at 6:56 AM, dizmo said:

TL;DR - They're not going to take the resources to do this.

It wouldn’t take much effort on their side since they can just quote iFixit.

With batteries being considered disposable items, fragile glass on both sides and phones lasting longer and longer I think it’s fair to factor in the cost of repair.

-

If you want to spawn in that many blocks you should use a plugin which doesn’t block the main server thread.

Something like WorldEdit with the async mode should work nicely. A Raspberry Pi 3 can spawn millions of blocks using that.

-

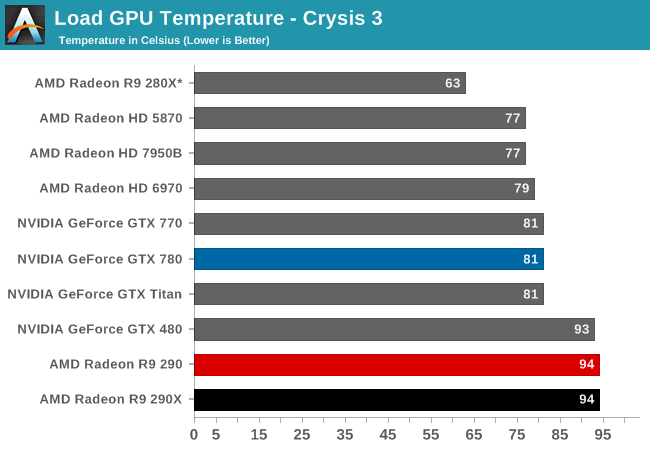

For a reference (blower) R9 290, 90+ °C is completely normal. They ran extremely hot and sounded like a jet engine.

However, the temperature doesn't seem to affect the card's longevity, as AMD rated it for that temperature and my R9 290 is still working 6 years later. I would recommend reducing the power target and underclocking it for general use; -20% for both keeps it in the mid 80s without shredding your ears.

Quote

Our Crysis 3 noise chart is something that words almost don’t do justice for. It’s something that needs to be looked at and allowed to sink in for a moment.

With the 290 AMD has thrown out any kind of reasonable noise parameters, instead choosing to chase performance and price above everything else. As a result at 57.2dB the 290 is the loudest single-GPU card in our current collection of results. It’s louder than 290X (a card that was already at the limit for reasonable), it’s louder than the 7970 Boost (an odd card that operated at too high a voltage and thankfully never saw a retail reference release), and it’s 2.5dB louder than the GTX 480, the benchmark for loud cards. Even GTX 700 series SLI setups aren’t this loud, and that’s a pair of cards.


Random keyboard inputs from windows 10.(I tried about everything)

in Troubleshooting

Posted

Lots of Linux distributions let you boot from a USB stick or CD so you can test everything out before you install.

All you need to do is download Ubuntu or some other distribution and write the image to a USB stick using a tool like Rufus. You can then boot from the USB stick.