Search the Community

Showing results for tags 'iscsi'.

-

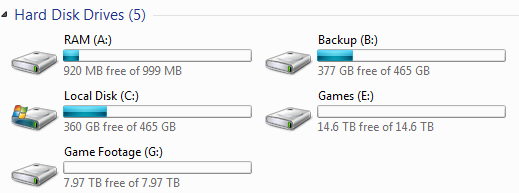

Hi all. The purpose of this post: I am soon going to be upgrading one of my servers. There is a TrueNAS Core virtual machine running on it. I intend to install TrueNAS SCALE instead, with 5 x 4TB hard drives in RAIDZ1 and 2 x 16GB Intel Optane drives for deduplication. I will set up two iSCSI devices connecting to 12TB (maybe more) sparse drives.

One iSCSI device will connect to a Windows virtual machine. That drive will have all my games copied over. Its only job will be to update games. A Proxmox LXC container on my smaller server runs a lancache server. Details of it can be found here: https://lancache.net/ Lancache is software that can be installed on, I think, any Linux OS. It is typically used for large gaming LAN parties, but I use it on a much smaller scale.

My main PC will connect to the second iSCSI device, running and playing games off that. Gaming would be sluggish doing that alone. I have a PCIe 3.0 adapter and will be adding another NVMe drive as a cache drive. I use PrimoCache software. I have used it for a while and it works well. It is suited to block storage, such as caching a hard drive or, for me, an iSCSI share.

The idea is:

- Games will be updated in the background through the Windows virtual machine on the server.
- When updating, the data will probably already be cached, so it will download much faster from the lancache server.
- Writes to the server will also be fast, as deduplication means few actual writes to the drives when the same data is already stored.
- The bottleneck, if any, will be the network, which is 2.5Gb.

I want to get a 500 - 512 GB NVMe PCIe 3.0 drive for a cache. Which one to get though? There are some fairly cheap ones. It will be hit fairly hard, so my thinking is a decent quality one with DRAM and SLC caching. If I go super cheap, it may not last long. I also do not want to spend a lot. Maybe a long warranty is an indicator? Suitable suggestions are appreciated. Thanks

-

Tagged with: primocache, nvme (and 4 more)

-

For the uninitiated, what is PXE/iPXE Network Boot? PXE/iPXE, or Preboot eXecution Environment, is a feature included with many makes and models of network adapter, used to boot a host from a network resource. Unlike traditional methods of booting a computer, wherein you boot from a HDD/SSD, CD, or USB device, PXE/iPXE enables your computer to reach out to a server on the network hosting the appropriate services to boot from. This can be a server hosting services such as TFTP, iSCSI, NFS, or even FCoE.

NOTE: At this time this tutorial only supports Legacy Boot. UEFI Boot has not successfully been tested and therefore has been excluded for the time being.

Index

1. Hardware Requirements
2. Preparing Installation Media
3. Prepping the Server Operating System
4. Installing & Modifying the Client Operating System

So what should happen now, if you have PXE/iPXE set as your primary boot device, is:

- PXE/iPXE queries the FreeBSD DHCP service for its assigned IP address, meanwhile collecting the IP & filename for TFTP.
- PXE/iPXE queries the FreeBSD TFTP service for the file, chainloads (downloads) it, and boots it.
- The now updated iPXE image runs the special embedded script we wrote, which:
  - Queries FreeBSD DHCP for its assigned IP again.
  - Sets the initiator-iqn.
  - Makes a sanboot request to the initiator-iqn.
- This starts loading GRUB (the bootloader). GRUB queries the FreeBSD iSCSI service based on how we edited GRUB_CMDLINE_LINUX_DEFAULT.
- As the OS starts to load, it starts the iscsi module and queries the FreeBSD iSCSI service based on how we edited /etc/iscsi/initiatorname.iscsi.
- Finally, after this process finishes, the system wraps up what other data it needs to load into memory from disk.

We have a successful SAN booted PC! There is no GUI but one can be installed. Comment below if you're interested in that. Otherwise enjoy!
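The embedded script itself is not shown in this excerpt. As a rough sketch of what the DHCP, initiator-iqn and sanboot steps could look like, the Python below generates a small iPXE script. The server address and both IQNs are made-up placeholders, not values from the tutorial. The URI layout assumes the iPXE convention `iscsi:<servername>:<protocol>:<port>:<LUN>:<targetname>`, with an empty protocol field and the LUN written in hexadecimal.

```python
def iscsi_san_uri(server, target_iqn, port=3260, lun=0):
    """Build an iPXE-style iSCSI SAN URI.

    Layout: iscsi:<servername>:<protocol>:<port>:<LUN>:<targetname>
    The protocol field is left empty (default), the LUN is hexadecimal.
    """
    return f"iscsi:{server}::{port}:{lun:x}:{target_iqn}"


def build_ipxe_script(server, target_iqn, initiator_iqn):
    """Return the text of a minimal embedded iPXE script."""
    lines = [
        "#!ipxe",
        "dhcp",                                   # ask DHCP for an address again
        f"set initiator-iqn {initiator_iqn}",     # identify this client
        f"sanboot {iscsi_san_uri(server, target_iqn)}",  # boot from the SAN disk
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    print(build_ipxe_script(
        server="192.168.1.10",
        target_iqn="iqn.2000-01.example.san:disk0",
        initiator_iqn="iqn.2000-01.example.client:node1",
    ))
```

Generating the script from a function like this makes it easy to produce one script per client if each node boots from its own target.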

-

This is a project I've been meaning to get off the ground for quite some time. Given my work schedule, I predict I'll only be able to work on it and post updates once a week, so to all who choose to follow along I ask for your patience.

For those clicking because they're not familiar with the SAN & iPXE terminologies: this is a special network project I've been planning and have had in the works for a number of months. Finally I have everything I need to get it started, and I figured some of you might like to follow along. I plan to write a full tutorial based on what ends up working here if you'd like to try building something like this yourself.

The focus of this build log is to set up a small series of servers so that they all boot off of a network resource. The reasons for doing this are:

- Ease of Repairability
- Reduce Downtime
- Ease of Scalability
- Overall it's very cool to me and I want to play with it. :3

The build log is going to consist of four main stages:

- Preparing the network hardware (assembling, configuring, firmware updates)
- Setting up our hypervisor server (installing NICs, configuring IPs, preparing Virtual Machines)
- Configuring the hosting servers (iSCSI & DHCP servers)
- Setting up the client servers (establishing iSCSI connections, installing OSes from scratch)

In the end these client servers will act as nodes, and with this network additional nodes will be added in the future. For the time being this is the network hardware we'll be working with:

- 2x PCIe-10G-SFP+ made by TG-NET based on the BCM57810S controller. These will be installed in the hypervisor server and will be in charge of hosting the virtual disks our nodes will boot from.
- 3x 10GbE SFP+ Mellanox ConnectX-2 MNPA19-XTR network cards. These 10 Gigabit NICs are very old but very cool for networking aficionados. Did I mention they're cheap?
- 14x FiberStore 10G SFP+ 850nm 300m transceivers. These are cheap SFP+ modules that convert the electrical signals the NIC puts out into laser light signals that we can hook up our fiber patch cables to.
- 7x OFNR LC/UPC-LC/UPC 50/125 OM4 Multimode Fiber-optic patch cables. These are inexpensive glass/ceramic composite fiber optic patch cables. They're easy to get and are good for short runs. I've only tested them for 10Gig up to 50ft, but I'm sure you can use them for longer runs.

Tonight is just an introduction. I will try to get something started tomorrow. I'm excited to get this underway and to try and overcome the hurdles I'll inevitably have to cross.

- 34 replies

-

Tagged with: san, fiber-optics (and 3 more)

-

I've long wanted to experiment with using a virtual machine to run a ZFS raid and export that ZFS raid as an iSCSI block device to the host. It was just an idea, but with the new system I built it became a necessity. I built a triple-use workstation for gaming, DAW and software development. I used two Windows 10 Pro boot partitions, one for gaming and the other for the DAW. The dev partition is Ubuntu 20.04.

The problem? You can't share raid storage between Windows and Linux, except maybe if you dedicate a raid controller with drivers for both. Those are expensive, especially when they involve 4 x NVMe drives. I also have 2 x 6TB Seagate Ironwolf drives (nearline backups) and 3 x 2TB Samsung 970 Evo drives (mid speed storage). I use all this storage for my Windows DAW and for my software engineering work. I really needed to raid it all between two different operating systems.

So, I tried my idea. For the test I used a pair of the Evos. One of the Evos is actually dedicated as a boot solution for the three OS partitions. I still haven't found a cross-platform way to BOOT raid from the same drives, so one of the Evos is devoted to that.

The Ubuntu VM config:

- Hyper-V with Ubuntu 20.04
- 2 cpus
- 3 GB RAM
- 1 virtual NIC
- 2 x Samsung 970 Evos with 2 TB capacity
- ZFS "raid 0"
- Open-scsi target on a block device that used 80% of the raid pair

The Windows 10 Pro host config:

- x3950
- 64 gigs RAM
- Microsoft iSCSI Initiator
- NTFS formatting of the attached iSCSI device

I actually tried a lot of different configurations of virtual cpus and RAM. Anything over 2 cpus performed worse. Anything over 3 gigs of RAM performed worse. The worst performance was with 32 cpus and 32 gigs of RAM. When you see the results in the attached capture of ATTO, you'll see that it isn't possible to read any FASTER than the iSCSI solution, as I slammed up against SATA transfer rates. Also, 1 cpu and 1 gig of memory was pretty good, only about 10% less performant than 2 cpus and 3 gigs.

Unfortunately, writes are not quite up to snuff. I think that somehow I was only able to write to the drives one at a time. In Task Manager I would see the two drives oscillate with the writes. This is more of a proof of concept than a real benchmark. Maybe someone will want to run with this and do a better benchmark?

I haven't tried the Ubuntu side yet, but I did a really simple POC of the whole solution with an attached USB drive, and Ubuntu could read the NTFS partition on the ZFS block device just fine. I'm curious to see if it's faster or slower than iSCSI over the virtual NIC. I may also do this with the 4 x NVMe, but right now I have a coding project I don't want to disrupt with backing up the NVMe dynamic disk raid and restoring it with ZFS over iSCSI.

Images attached are the ATTO benchmark and one of the times when I caught the Evo bumping up against SATA limits during the run of the benchmarks.

-

Hi, I have configured a Tiny PXE server for booting Windows 10 from the local network (NOT the installer, the actual Windows) with iSCSI, and everything worked as expected (I followed a YouTube guide). However, I don't want to use the generic ISO downloaded from a website suggested in the video, because I don't trust it much (for a first test I used it just to see if it would work). So I proceeded to install Windows from my own ISO into a Hyper-V virtual machine, exported the VHDX, copied it into the iSCSI virtual disks folder and tried to boot. Windows starts to boot, then fails with a BSOD and the error "INACCESSIBLE_BOOT_DEVICE". I'm not able to figure out why the client PC won't boot, since the suggested ISO does work and my ISO successfully installed and booted in the VM. Thank you

-

Hi, is it possible to set up a Windows machine (perhaps during Windows installation) to only have an iSCSI disk and no physical storage, so the machine's entire storage (including the OS) is stored on the NAS device?

-

I just watched this video on using iSCSI for Steam and feel like it's missing a ton of content that would help me better understand how it works and possible use cases.

I understand the concept, but as soon as I try to implement it, I think I'll run into some major snags. I already have plenty of storage on my PCs as it is, but having dedicated storage just for my Steam library would be nice; although, I'd need to upgrade my machines from 2.5Gb to 10Gb Ethernet, which costs more than buying larger SSDs.

Right now, I'm trying to figure out use cases:

- I don't believe this is compatible with DirectStorage, but maybe it is if I use only SSDs in TrueNAS? Not sure what Windows looks for in terms of DirectStorage compatibility.
- Since this formats as NTFS on top of ZFS, is there a problem with snapshots or other ZFS utilities? This sounds a lot like LVM, where you're creating a virtual filesystem on top of a real one; almost like a virtual machine.
- Can that iSCSI NTFS share be read by TrueNAS, or is it something only that one Windows machine can read? I'm wondering what happens if I want to modify that data from another PC or if I reformat Windows.
- Is there a reason why I wouldn't use this for file sharing drives? Is it because each computer has to have its own iSCSI filesystem? Network drives are for multiple machines but iSCSI is for a single machine?

-

Tagged with: network drive, samba (and 2 more)

-

Hi Guys, need a bit of help. I have been tasked with finding the correct PCI cards that will work with the SAN below, and also which cables I need.

SAN: MSA 2052 iSCSI 10Gb

I need the card for an ML350 Gen 10 to connect to the 10Gb iSCSI. I have spent hours looking for the relevant card and cable I need to connect this SAN to one server for a client. I know that some people will say I shouldn't be touching what I don't understand, but this is for a client and this is how you learn ;D Any help would be massively appreciated, as I need to have somewhat of a proposal ready tomorrow for them. Thanks in advance, David

-

(cross-posted from Superuser, because it's all crickets over there...)

I've been toying around with iSCSI for a little while (FreeNAS 11.1-U5 target, Win10 Pro (1803) initiator) and have run into what would be a bit of a deal-breaking issue: I can't seem to disconnect from an iSCSI target, regardless of what I try. All methods get a "The session cannot be logged out since a device on that session is currently being used" message and fail to disconnect.

What I've tried:

- Clicking Disconnect in the iSCSI Initiator panel
- Running the equivalent command in PowerShell
- Taking the disk offline in Disk Management first
- Disabling the Microsoft iSCSI Initiator device in Device Manager (requires a reboot, not tenable)
- Turning the iSCSI service off in FreeNAS (this works eventually, but I'd like to not have to do it this way, if I can help it)
- Per other sources, I've removed all favorite targets and target portals in the iSCSI properties dialog first

The 'device' is not currently initialized in Windows, and therefore does not have a file system (so, if I understand correctly, it cannot be accessed by the OS in a way that would hold the connection open); it's just a disk device at the moment. My preferred behavior would be to disconnect on request (or at least, successfully force disconnect), so I can then connect from another machine, and then back again when necessary. Thanks in advance for any ideas!

Hardware Details:

- Win10 Pro System (desktop): Ryzen 5 1600X, 16GB RAM, NVMe boot drive, Mellanox ConnectX-2 (direct connection to server)
- FreeNAS System (server): Core i3-4170, 32GB RAM, 4×10TB in RAIDZ2, Mellanox ConnectX-2 (direct connection to desktop)

-

I'm in the process of moving from a direct-attached array to a 10GbE Asustor NAS. All was going well until I realized that my cloud backup provider (Backblaze) doesn't support backups from network drives unless you upgrade your subscription to a metered one that would be 10X the cost for my current amount of data (~14TB). After reading about iSCSI and SANs, it seems to me like I could create a local logical drive in Windows using the NAS's volume as an iSCSI LUN and trick the Backblaze uploader into thinking it's a local drive. I'm a little worried about reliability though. Does anyone know if there are things I should look out for? The Asustor AS4004T NAS is connected peer-to-peer via a 10GbE NIC on my editing machine. Am I crazy, or would this work and potentially save me $50 USD/mo on a B2 Cloud subscription?

-

So I picked up a set of Chelsio T62100-SO-CR a while ago in hopes of moving off from the 8Gbps Qlogic FC cards I have and over to 100Gbps FCoE and 100Gbe for connectivity to my FreeNAS box here at the house. Apparently, I did not RTFM hard enough, 100Gbe works out of the box pretty nicely. So here is the question, do I give up on FCoE and try to make the move over to iSCSI boot for my desktop or do I try and get creative with iSER or get my kneepads and ask Chelsio to add FCoE support into the FreeBSD stack they put out? Am I missing something cooler I should be doing?

-

So, I am thinking of setting up a server that contains one 500GB or 1TB SSD, partitioned into smaller partitions, to hold the OS installs for multiple computers. My goal is to turn some of my computers diskless and have them boot from those small partitions instead. I've seen setups where there is only one OS image shared across multiple computers, and a restart of the computer means that none of the changes made are saved.

What I want is:

- A Windows 10 installation on each of those partitions, but the installations must be different (not shared between all computers)
- The changes made while booted via PXE (e.g. installing a program) must be saved even after rebooting

*Basically, I want to have a full OS in the partition like with a local drive, but accessed via the network instead of locally.*

The reason I want to do this is that I have 10 machines running old IDE drives, but they don't need the storage capacity (32GB for each computer is more than enough). I don't want to go out and buy large SSDs, or buy a lot of small 32/64GB drives that would be useless in the future. Is this even possible? Thanks for the help!
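For what it's worth, the capacity side of this is simple arithmetic: 10 machines at 32GB each need 320GB, which fits on a 500GB SSD. A minimal sketch of such a plan is below; the client names and the target IQN prefix are hypothetical, and it ignores filesystem overhead and the gap between marketed and usable capacity.

```python
def plan_boot_volumes(clients, size_gb, disk_gb):
    """Give every client its own (non-shared) boot volume of size_gb.

    Returns (plan, free_gb). Raises ValueError if the disk is too small.
    The IQN prefix is a made-up placeholder, not a real naming scheme.
    """
    needed_gb = len(clients) * size_gb
    if needed_gb > disk_gb:
        raise ValueError(
            f"need {needed_gb} GB for {len(clients)} clients, "
            f"but the disk only has {disk_gb} GB"
        )
    plan = {
        name: {"size_gb": size_gb, "target": f"iqn.2000-01.example.lab:{name}"}
        for name in clients
    }
    return plan, disk_gb - needed_gb


if __name__ == "__main__":
    clients = [f"pc{n:02d}" for n in range(1, 11)]  # ten diskless machines
    plan, free_gb = plan_boot_volumes(clients, size_gb=32, disk_gb=500)
    for name, volume in plan.items():
        print(name, volume["size_gb"], "GB ->", volume["target"])
    print("unallocated:", free_gb, "GB")
```

One volume per client is what keeps the installations separate and lets changes persist across reboots, as opposed to the shared read-only image setups mentioned above.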

-

Hello everyone, sorry if this has already been posted; I had a look around and couldn't find anything to fit me. I need two switches to carry HA iSCSI and regular traffic (separated by VLANs). I want a few (at least four) 10Gb ports; the rest can be 1Gb. It needs to be rack mount and have at least 24 ports. I want the 10Gb ports for connecting the switches together (do I need to do this with an HA setup? If not, just two 10Gb ports) and for connecting to the iSCSI target and backup/NAS. I don't have a set budget, but I need it as cheap as possible. If it's cheaper to get two gigabit switches and a separate set of 10Gb switches, I can do that. I don't mind second hand. Power draw isn't an issue. Thanks in advance, The Audtior

-

So, this question is kind of long and technically 2 separate questions, but they relate so I'll keep them together. At the minute I've got 4 systems on my wired network:

- My desktop, which has an i7 4790k in it
- An IBM x3650 M1 (2x Xeon e5450, 36GB RAM) which runs virtual desktops and virtual apps
- An IBM x3650 M2 (2x Xeon X5650, 64GB RAM) which is my main lab server as well as running VMware vCenter
- My main server, which runs a router as well as Windows Server 2016 that serves as my NAS and Plex server. This machine is really underpowered, with only an AMD a4-6300 dual core and 8GB of RAM. I really cheaped out on it but it runs fine.

The three servers are all running VMware ESXi 6.5 and are connected to the vCenter Server on the x3650 M2. The main server only has a dual port gigabit card in it, which has one port for WAN while the other goes to a 24-port gigabit switch which has everything else connected to it. So everything is running on a standard 1 gigabit connection to the rest of the network. There is an exception to this: I've got single port 10 gigabit SFP+ cards in both my desktop and my x3650 M2, which have a DAC cable running between them.

What I want to do is buy another single port card for my x3650 M1 and 2 dual port cards to put into my main server. I can't do this with the current server as it's only got one PCIe slot (it's ITX), which has the dual gigabit card in it. I intend to upgrade my desktop to an i7 8700k in the coming months, so the 4790k system will become the main server and the POS a4-6300 will be retired. So I'll be able to do dual 10G cards then. For now, what I will do is get the dual 10G cards for the x3650 M2 instead. I intend to run fibre from the two dual port cards to each of the other systems with a 10G card, so there'll be one central system with 4 SFP+ ports that connect to everything else.

With this, what I hope to do is set up a VM on the server that will act as a 10G switch. For the switch VM, I will use PCIe passthrough to give the VM direct access to the NICs, and I'll give it a 10G port on the vSwitch as well so the other VMs can communicate with the rest of the network. I want to know if it is possible to set up a VM to act purely as a switch between essentially 5 different network ports. If this is possible with pfSense it'd make life easier, because I can just do it with the one VM instead of having a separate router and switch.

The other part of my question: when I build my 8700k system, I want to only have 2 NVMe SSDs in it, and no SATA drives at all. My plan is to set up a 3TB iSCSI drive on my NAS and have my desktop connect to that for all my games and user files. I also want to move the drives from the IBM servers to the NAS to run all the VMs from network storage (I might have to buy a SAN disk shelf for this and use a SAS expander, but that's not a problem). I know it's technically possible, but are iSCSI and a 10G network actually stable enough to support this? And how much CPU horsepower will it use (if any)?

I'm sorry if I've confused you people, but I like overly complicated things. Thanks for any help.

-

At the studio that I am working at we have set up a FreeNAS server and it has been working great. We use Fiber Channel cards and iSCSI to push to the machines. When we set this up a few months back we had two machines running as clients. Both machines are identical 2010 Mac Pro towers (souped up quite a bit). We added a 3rd, identical machine with an identical fiber card. This machine does not talk to the server! The machine sees the card, the connections, everything. But the server doesn't seem to "push" the iSCSI drives to it. If we install one of the fiber cards from the two working machines, it mounts right away. We thought it was a bad card, got a few more, and we get the same result. The FreeNAS server only pushes to the two original machines.

Nowhere can I find this same problem, or even how to add the machine to FreeNAS. As far as I know I don't have to, as long as it's networked the same as the other two working machines. We have tried every combination of hardware and software to no avail. We dual boot Windows 10 on these as well, and the two "working" cards mount up just fine, no configuration, but any other card does not. There is almost no chance that out of the 6 cards we have now, only two happen to work! We have also tried every combination of the SFPs from the cards too, no change.

If anyone has any idea how to fix this or even get us on the track to fixing it, it would be incredibly appreciated. This has been driving us insane! Thank you all very much in advance!

*EDIT* The cards are LSI 7204EP-LC 4Gb cards and the switch is a 4Gb Fiber Channel switch. The two working machines needed NO configuration to accept the target drives, and work no matter the configuration of the switch, even directly connected.

-

Just adding a thread for those that search for it in the future. There are plenty of guides / videos on how to properly bond NICs for iSCSI in ESXi, but one thing they all leave out (at least the guides/videos I've gone through) is to enable round robin under multipathing. The only device I have with multiple NICs is my ESXi server, so I was unable to test from my desktop, which made testing difficult. Find your datastore > properties > multipathing, and make sure round robin is set. By default VMware has it set to "most recently used." http://imgur.com/HRyxOc2 ^Finally getting 2gbit speeds. Sadly it took me a whole day to narrow down where the issue was and then which configuration wasn't correct. Also, a super useful tool for testing throughput / IOPS etc. is VMware's ioanalyzer.
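To show why the policy matters, here is a toy model (not VMware's actual implementation) of how I/O requests would spread across two paths under each setting. The path names are hypothetical, and the "most recently used" branch assumes the first path never fails, so it simply keeps being reused.

```python
from collections import Counter
from itertools import cycle


def distribute(io_count, paths, policy):
    """Count how many I/O requests land on each path under a given policy."""
    counts = Counter({path: 0 for path in paths})
    if policy == "round_robin":
        # Rotate through every available path in turn.
        chooser = cycle(paths)
        for _ in range(io_count):
            counts[next(chooser)] += 1
    elif policy == "most_recently_used":
        # Stick with the path already in use (assumed: paths[0], no failover).
        counts[paths[0]] += io_count
    else:
        raise ValueError(f"unknown policy: {policy}")
    return dict(counts)


if __name__ == "__main__":
    paths = ["vmnic1", "vmnic2"]  # hypothetical names for two 1 Gbit uplinks
    for policy in ("most_recently_used", "round_robin"):
        print(policy, distribute(1000, paths, policy))
```

With everything pinned to one path, the second NIC sits idle, which matches being stuck at single-link speed until round robin is enabled.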

-

Hi Everyone, I was watching one of the LTT videos and heard that Slick uses an iSCSI drive to install games to over the network, so that when he builds a new test bench he doesn't have to reimage the OS to have all of the games for benchmarking. I understand what iSCSI is and how to set it up. My question is: what would be the benefit of installing games on an iSCSI drive vs a portable HDD, since you can only write to the iSCSI drive from one device at a time to avoid corruption? I would be gaming from a couple of computers and want the same instance of all of the games to be accessible from all stations, but only from one at a time. Would the only benefit of an iSCSI setup be ease of use and not having to carry my HDD around to each station, or is there another reason why iSCSI is better? If it is only for ease of use, I would rather have the portable drive and suck it up than drop a few hundred (at least) on an iSCSI setup. Thank you

-

I currently have a NAS set up as an iSCSI drive, which all my games and some programs are installed on. This is a single drive setup. Does anyone know what kind of performance increase I would see if I were to add a second drive in RAID0? I have a Gigabit connection to the NAS, so the network is not a bottleneck at the moment. Automatic backups are done twice daily, so I'm not worried about redundancy; I'm just interested in whether it would be worth the money to add a second drive to improve performance.
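A rough way to sanity-check where the limit sits is to compare the link's theoretical ceiling with what the drives can deliver. The sketch below assumes ideal RAID0 scaling and ignores protocol overhead; the 150 MB/s per-drive figure is a made-up example rather than a measurement, and random I/O on a hard drive can be far slower than that.

```python
def link_mb_per_s(gbit_per_s):
    """Theoretical ceiling of a link in MB/s (1 Gbit/s = 1000 Mbit/s, 8 bits per byte)."""
    return gbit_per_s * 1000 / 8


def effective_mb_per_s(gbit_per_s, drive_mb_per_s, drives):
    """Sequential throughput is capped by the slower of network and storage.

    Assumes RAID0 scales linearly with the number of drives, which is an
    idealisation.
    """
    storage = drive_mb_per_s * drives
    return min(link_mb_per_s(gbit_per_s), storage)


if __name__ == "__main__":
    assumed_drive_speed = 150.0  # MB/s, hypothetical sequential rate
    for drives in (1, 2):
        rate = effective_mb_per_s(1, assumed_drive_speed, drives)
        print(f"{drives} drive(s) over 1 Gbit: about {rate} MB/s")
```

If the single drive already reaches the link's ceiling on sequential transfers, a second drive would mostly show up in workloads where the drive, not the network, is the slower part.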

-

Having set up an iSCSI target on my Synology NAS box, I would like to ensure that all data on that target is encrypted. TrueCrypt sees the iSCSI target and is able to run through the "volume creation of non system partition" process fine. What I would like to know is:

1. Is there anything inherently insecure about using TrueCrypt/iSCSI in this manner?
2. Where is the encryption happening? I.e., is data encrypted by TrueCrypt BEFORE being sent to the iSCSI target? Or is data transferred insecurely/raw to the iSCSI target and THEN encrypted, which would leave traffic open to sniffing?
3. I would rather use TrueCrypt locally on my PC than have to go about compiling/installing TrueCrypt on my NAS.

Thanks!

- 2 replies

-

Tagged with: encryption, iscsi (and 1 more)

-

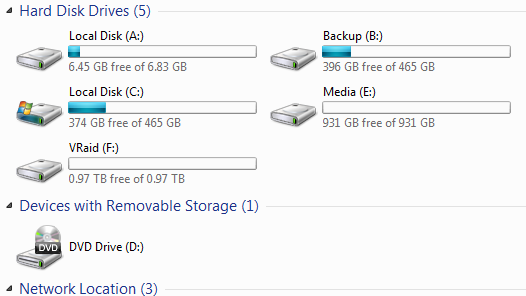

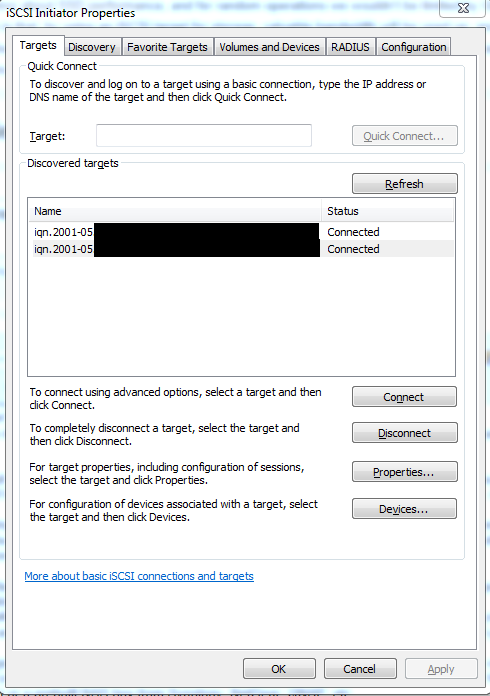

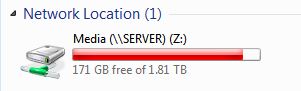

An Introduction to Storage Networking

SAN, NAS, networking, oh my! I see lots of talk about NAS setups in the storage section. Much of it revolves around increasing storage performance through caching and complex RAID arrays, but there's less discussion about the networks they're connected to. If your storage is high-performance, then your network is usually the next bottleneck in the system, especially when multiple users are connected to a NAS.

There are a couple of concepts that I'll mention, starting with the idea of a Storage Area Network: A SAN is a network which provides access to storage. Traditional implementations in the enterprise environment will use a dedicated network, and the storage is consolidated into a SAN appliance. Depending on the implementation, a SAN might be sharing individual hard drives, or volumes spread across multiple hard drives. The network also doesn't matter, but requires hardware and software support. Two of the more common ones are Fibre Channel and iSCSI. Fibre Channel uses fibre cables which provide lower latency than iSCSI, which is typically run over copper cabling using SFP+ or RJ45 connectors. In addition, data is transferred in block form, rather than in file form, and the storage devices appear as local devices.

Can you tell which of these are physical hard drives and which ones are SAN storage?

Answer: Here, the Media drive and the VRAID drive are iSCSI targets. These ones are coming from two separate SAN appliances in our lab.

This allows for all sorts of neat functionality. Firstly, it allows you to perform Windows Backups to the machine over a network without owning Windows 7 Professional. It also allows you to install programs to remote storage. That remote device, if it's a part of a RAID-protected volume, will be much safer than a single hard drive. Also, since the data is stored in block form, you can format an iSCSI target with whatever format your file system can support.

Next is networking protocols.
I won't talk about Fibre Channel since setting up an infrastructure is very expensive and specialized, and really only useful for the enterprise environment. However, I will discuss iSCSI, which has risen in popularity over the years and is usable in consumer environments. SCSI is an interface that allows one to directly attach storage appliances to servers, where they appear like local storage. iSCSI takes it one step further, making the protocol talk over a network rather than through a dedicated cable. You can use iSCSI on almost any computer in existence. If you're running Windows Vista or later, your OS comes with an iSCSI initiator by default (for Windows 2000, XP and Server 2003 it was a separate download from Microsoft). Linux has an iSCSI driver, FreeBSD has iscontrol, and you can get one for OS X (it doesn't come with one by default).

4) Another issue with having data served over iSCSI is that, should someone else do something intensive, your performance can also be affected. If your parents start doing a backup to the SAN, then you will be affected, which is annoying when trying to play a game. This could manifest as long loading times, or you could lag in-game because your program needed to access files on your storage. Either way, it's an inconvenience to you.

Solution: This is the most common problem with iSCSI, and the solution is to have this type of traffic routed over a dedicated network so that when your parents go to back up their computer, it will be over a different network connection, and you won't have as many issues. If your storage isn't adequate, you can still be affected. This feature isn't really doable with a prebuilt system, but most enterprise environments will use equipment that can do it. You can also build one yourself, using multiple network cards to split traffic between a public network and your SAN network.

Now let's talk about NAS. A NAS is similar to appliances connected to a SAN, but instead of appearing as a local device it appears as a networked device.
It's what you see when you map a network drive in Windows. The advantage of this is that a data store can be shared with multiple users, rather than. In addition, they're usually attached to an existing network of computers (sometimes called the 'client' network), rather than on a separate network.
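
To make the "block form versus file form" distinction a bit more concrete, here is a small sketch that treats an ordinary file as a stand-in for an iSCSI LUN and reads and writes it by block number. This is only an illustration of block addressing, not an iSCSI implementation; the 512-byte block size and the helper names are assumptions chosen for the example.

```python
import os
import tempfile

BLOCK_SIZE = 512  # assumed block size for the example


def make_backing_file(blocks):
    """Create an empty image file that plays the role of the remote disk."""
    fd, path = tempfile.mkstemp(suffix=".img")
    with os.fdopen(fd, "wb") as image:
        image.truncate(blocks * BLOCK_SIZE)
    return path


def write_block(path, index, data):
    """Overwrite exactly one block; the 'disk' knows nothing about files."""
    if len(data) != BLOCK_SIZE:
        raise ValueError("data must be exactly one block long")
    with open(path, "r+b") as device:
        device.seek(index * BLOCK_SIZE)
        device.write(data)


def read_block(path, index):
    """Read one block back by its number."""
    with open(path, "rb") as device:
        device.seek(index * BLOCK_SIZE)
        return device.read(BLOCK_SIZE)


if __name__ == "__main__":
    image = make_backing_file(blocks=8)
    write_block(image, 2, b"A" * BLOCK_SIZE)
    print(read_block(image, 2)[:8])
    os.remove(image)
```

Because the storage side only ever sees numbered blocks, the file system lives entirely on the computer that attached the disk, whereas a NAS share is addressed by file path and the NAS owns the file system.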

- 19 replies

-

Tagged with: storage, networking (and 8 more)

-

This post is for mid/large enterprise NAS and/or iSCSI solutions. If you have a home solution, please visit the other storage post: http://linustechtips.com/main/topic/21948-ltt-10tb-storage-show-off-topic/

To Do: Post up your $5k++ solutions. It's nice to see this grade because it's the geek's dream home setup, but enterprise grade (heck, if you have an enterprise setup at home, post it up).

To Do: Post pics or videos of your setups at work/home. It would be nice to see $8-15k NAS work storage, but if you have a few hundred thousand dollar setup, post it and make the High Roller TB list.

TB High Roller List
-------------------------
Username | TB #
1.)
2.)
3.)
4.)
5.)

Drive max capacity is measured in total TB, e.g. 4 x 1TB drives is 4TB total space.

-

Looking for a storage solution that would fit our needs as a primary backup target using Veeam. We'd like to have around 40TB usable on the appliance to fit all of our backup needs. We'd probably use the dedupe and compression methods on the Veeam side for any space savings. We're currently looking at the NetApp E-Series box and received a quote of $15k Canadian for 40TB. Is there anything cheaper out there that would suit our needs? Nothing fancy; we just need to dump our nightly Veeam backups, restore once in a while, and that's pretty much it. Thanks
