Showing results for tags 'redundant'.

-

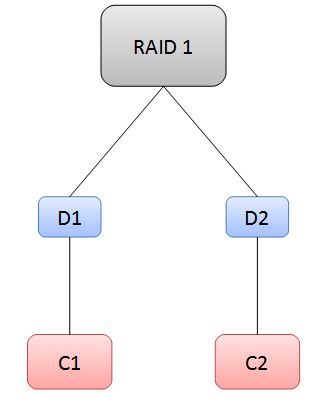

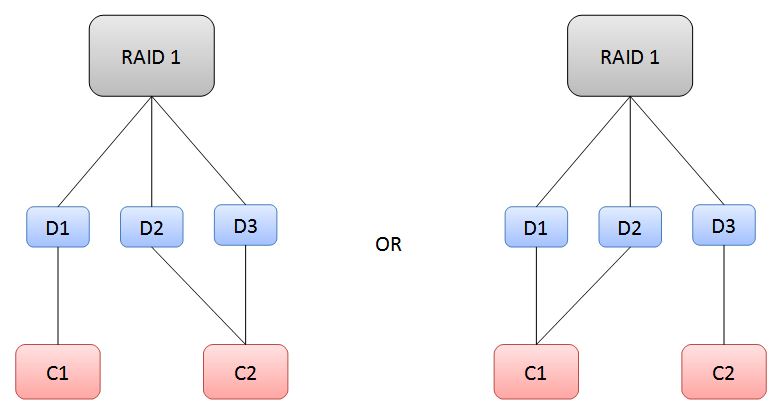

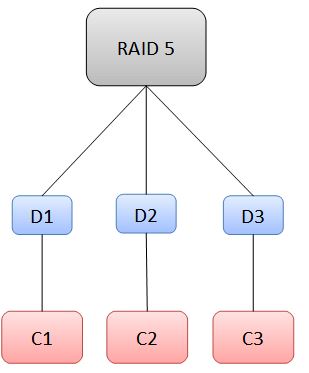

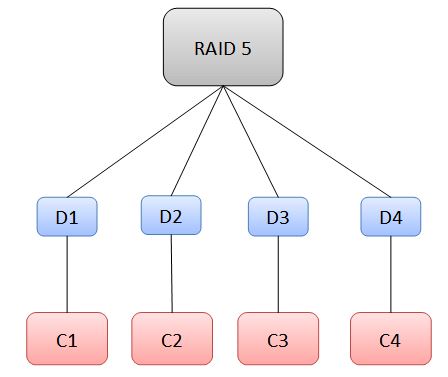

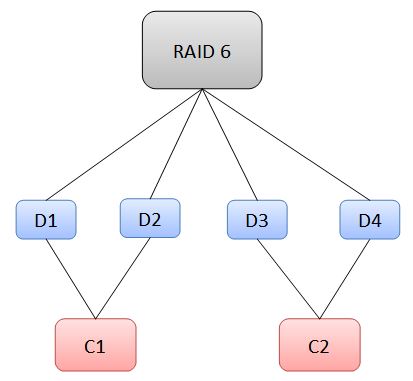

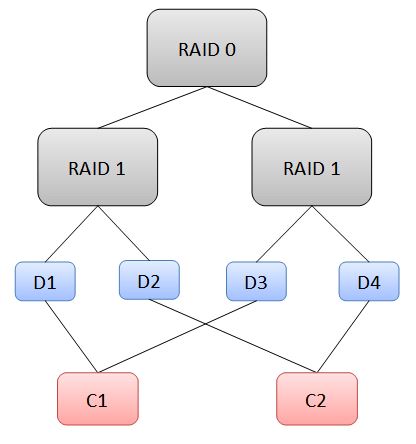

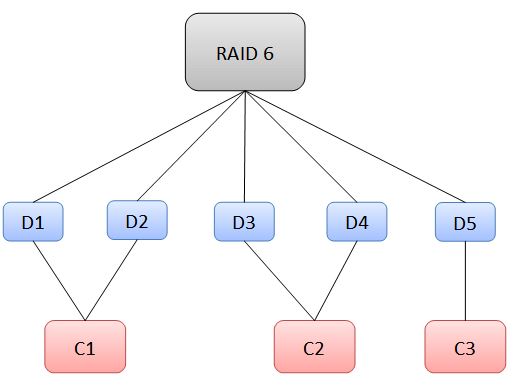

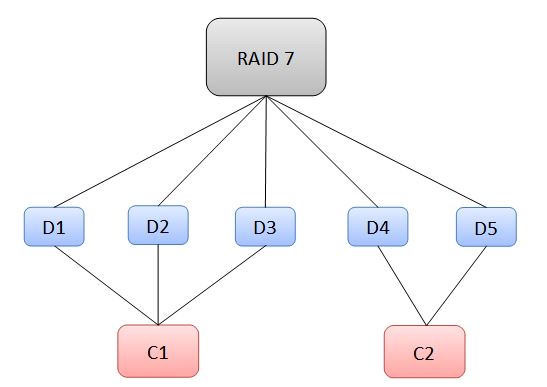

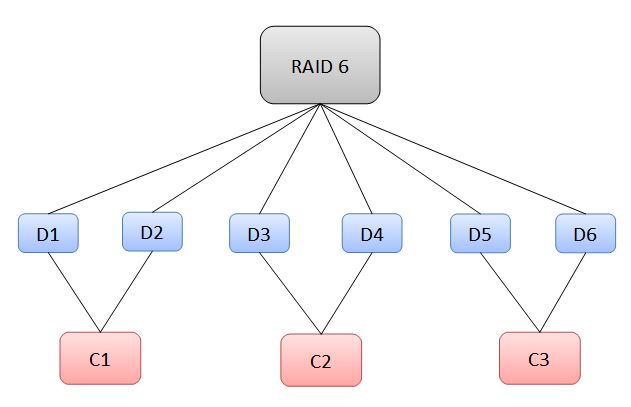

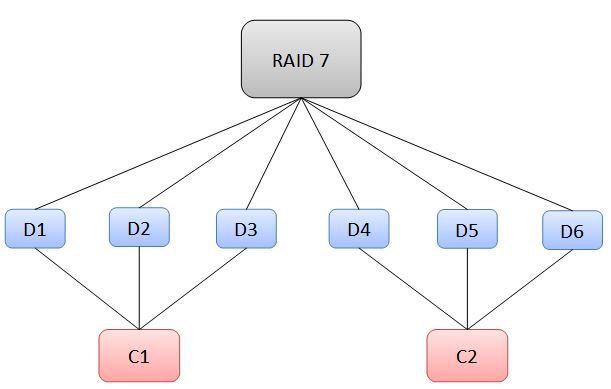

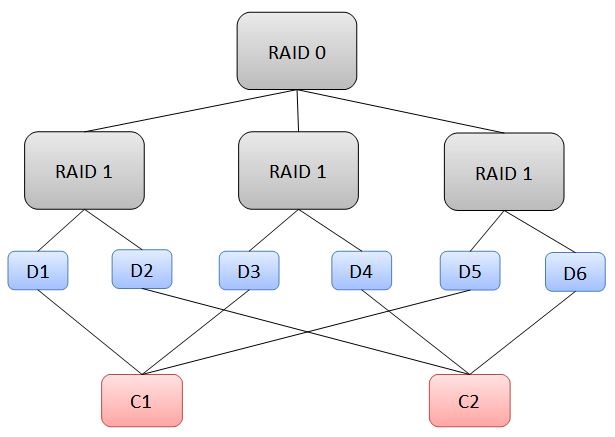

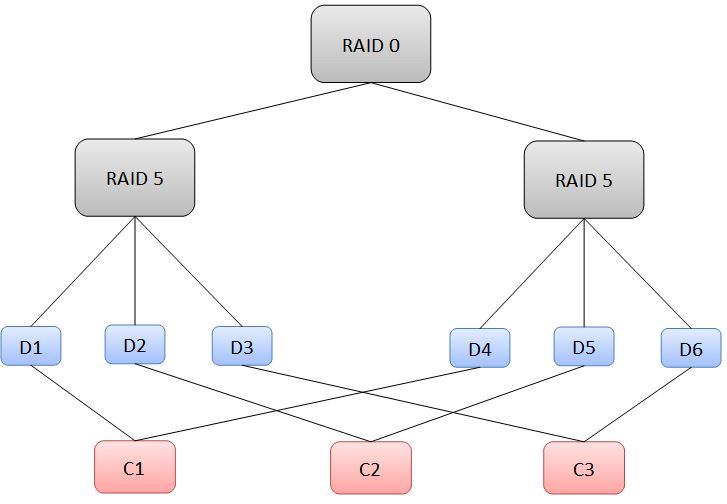

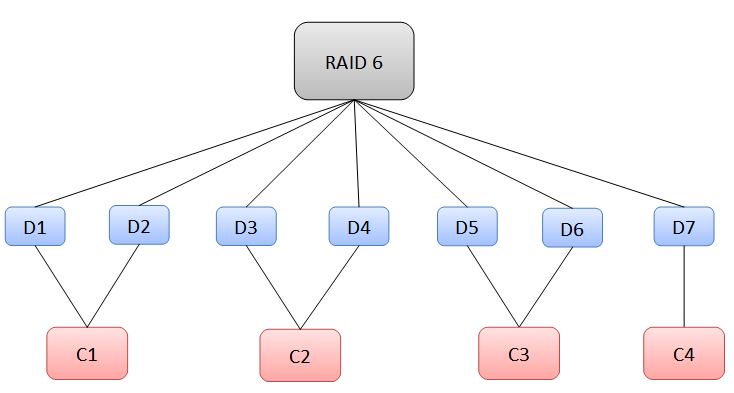

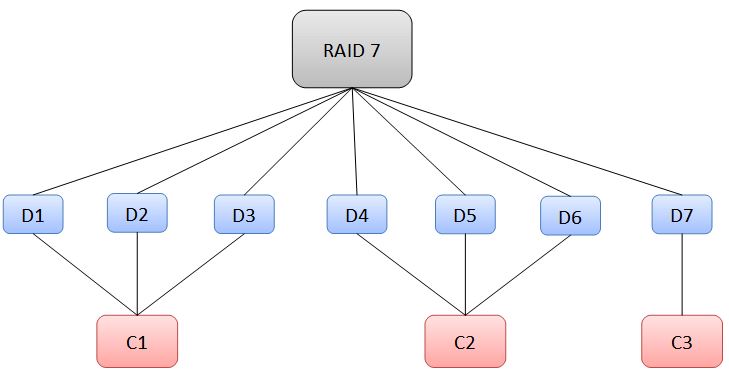

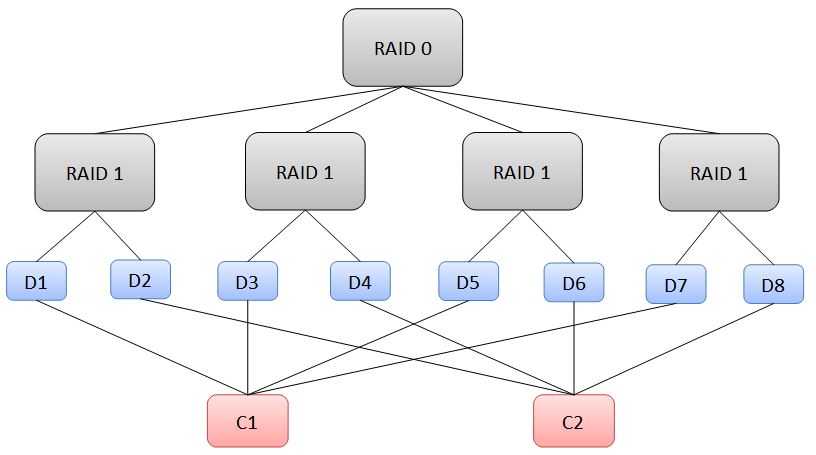

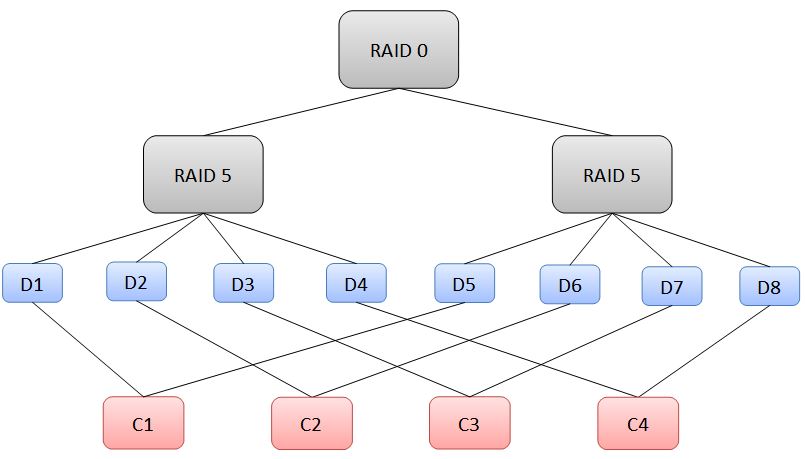

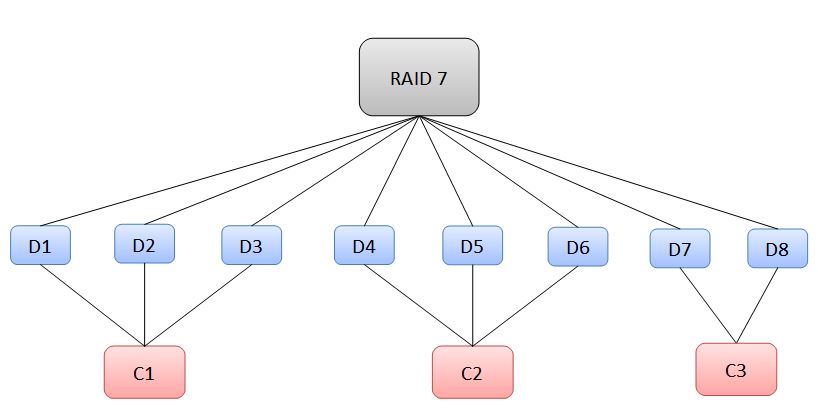

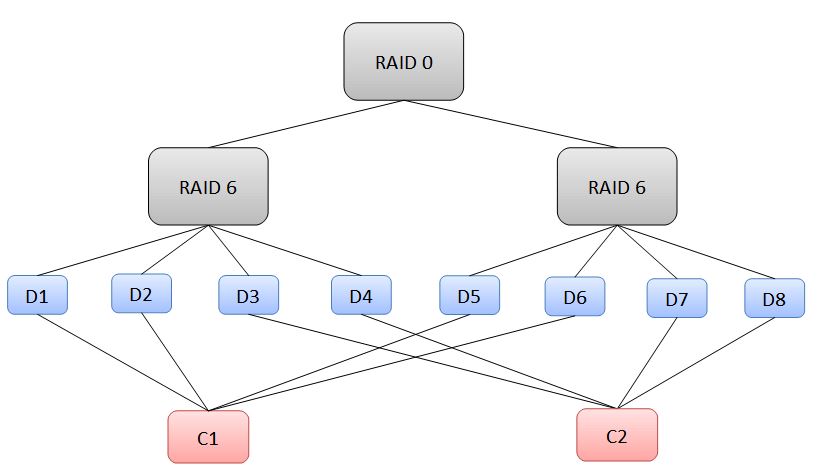

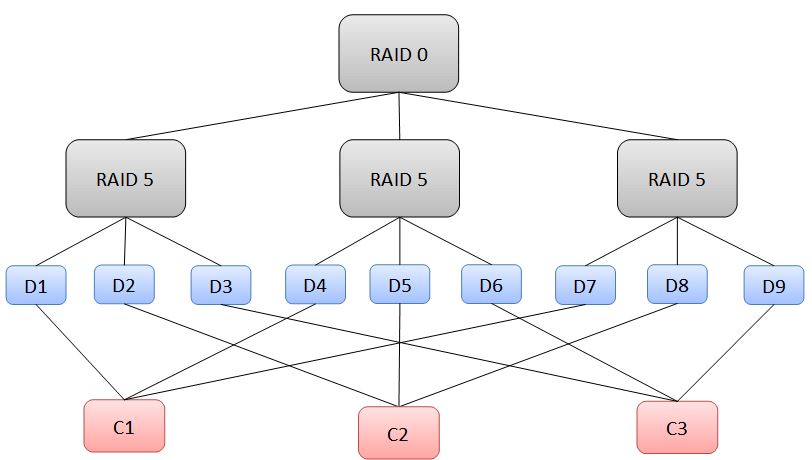

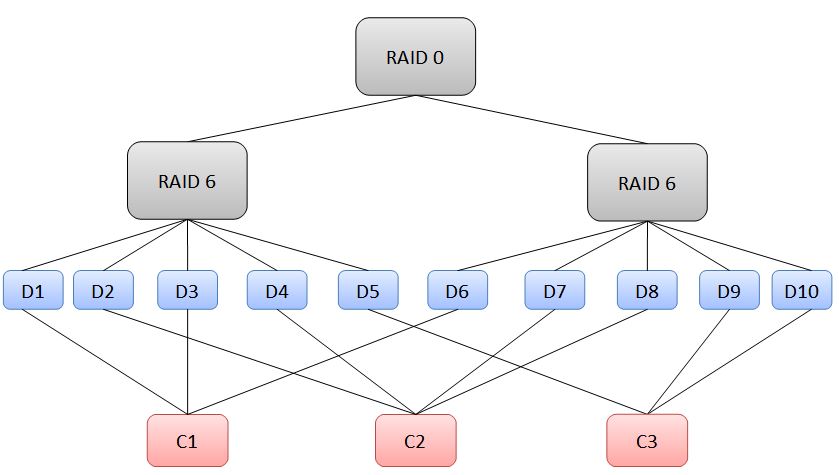

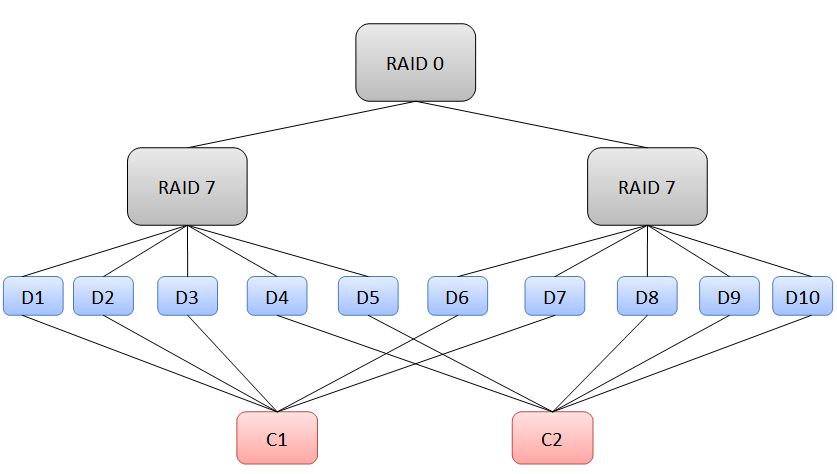

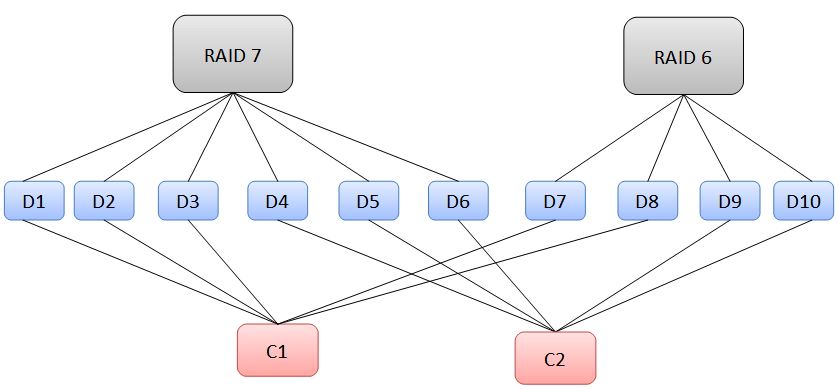

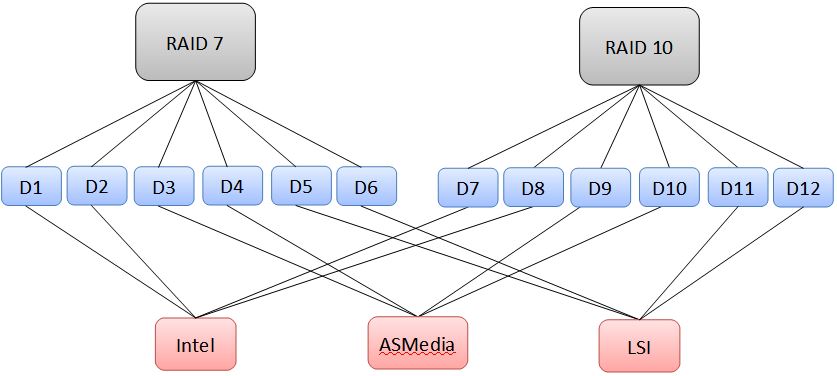

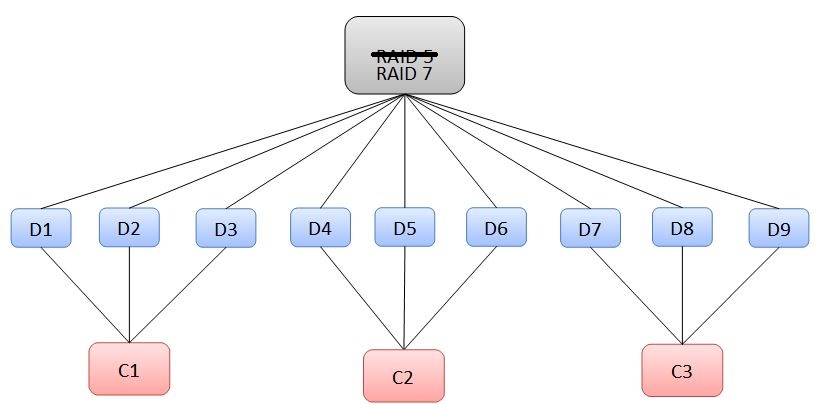

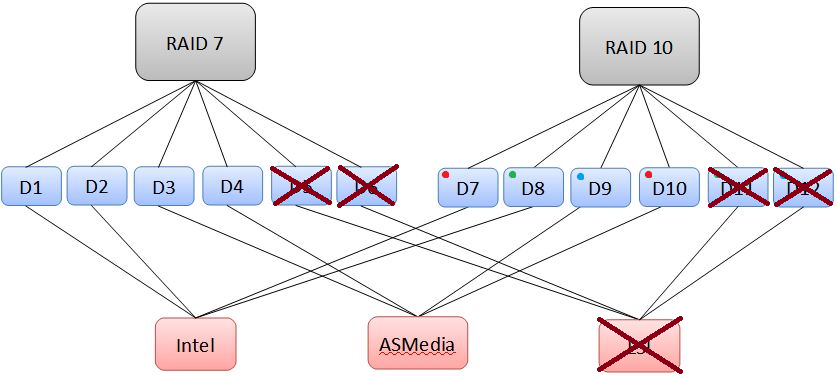

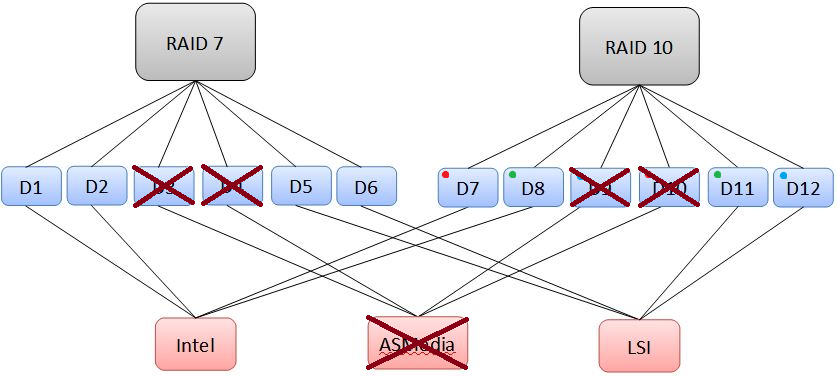

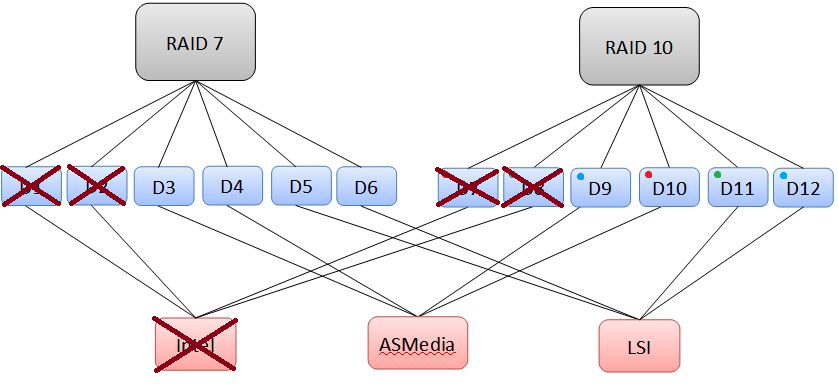

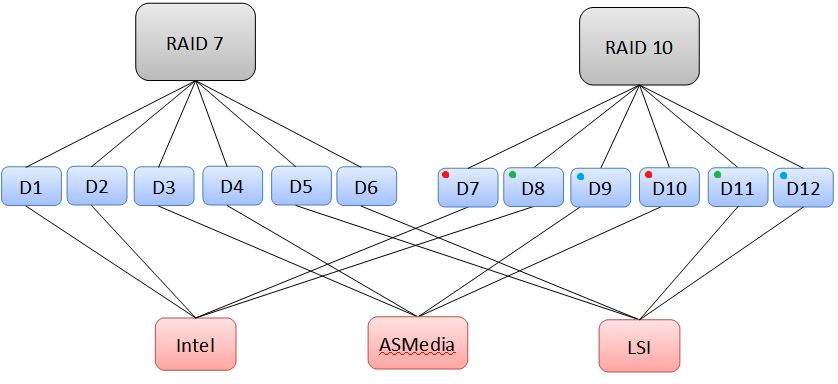

Reducing Single Points of Failure in Redundant Storage

In many of the storage builds here on the forum, the primary method of data protection is RAID, sometimes coupled with a backup solution (or the storage build is itself the backup solution). In the storage industry, there are loads of systems that use RAID to provide redundancy for customers' data. One key aspect of (good) storage solutions is resistance not only to drive failures (which happen a LOT), but to failures of other components as well. The goal is to have no single point of failure.

First, let's ask: What is a Single Point of Failure?

A single point of failure is exactly what it sounds like. Pick a component inside the storage system, and imagine that it is broken, removed, etc. Do you lose any data as a result? If so, then that component is a single point of failure. From this point forward, single point of failure will be abbreviated as SPoF.

Let's pick on looney again, using his system given here. Looney's build contains a FlexRAID software RAID array, which is composed of drives on two separate hardware RAID cards running as Host Bus Adapters, with a handful of iSCSI targeted drives. We'll just focus on the RAID arrays for now, since that seems to be where he would store data he wants to protect.

His two arrays are on two separate hardware RAID cards, which provide redundancy in case of drive failures. As long as he replaces drives as they fail, his array is unlikely to go down.

Now let's be mean and remove his IBM card, effectively removing 8 of his 14 drives. Since he only has two drives' worth of parity, is his system still online? No: we have exceeded the capability of his RAID array to recover from drive loss. If this system were all he had, that would make his IBM card a SPoF, as well as his RocketRaid card. However, he has a cloud backup service, which is very reliable in terms of keeping data intact.
In addition, being from the Kingdom of the Netherlands, he has fantastic 100/100 internet service, which makes recovering from a total system loss much easier. See why RAID doesn't constitute backup? It doesn't protect you from a catastrophic event.

In professional environments, lots of storage is done over specialized networks, where multiple systems can replicate data to keep it safe in the event of a single system loss. In addition, systems may have multiple storage controllers (not like RAID controllers) which allow a single system to keep operating in the event of a controller failure. These systems also run RAID to protect against drive loss.

In systems running the Z File System (ZFS), like FreeNAS or Ubuntu with ZFS installed, DIY users can eliminate SPoFs by using multiple storage controllers and planning their volumes to reduce the risk of data loss. Something similar can be done (I believe) with FlexRAID.

This article aims to provide examples of theoretical configurations, and will have some practical real-life examples as well. It will also outline the high (sometimes unreasonably high) cost of eliminating SPoFs for certain configurations, and aim to identify more efficient and practical ones.

Please note: There is no hardware RAID control going on here; it is all software RAID. When 'controllers' are mentioned, I am referring to the Intel/3rd party SATA chipsets on a motherboard, an add-in SATA controller (Host Bus Adapter), or an add-in RAID card running without RAID configured. The controllers only provide the computer with more SATA ports, and it is the software itself which controls the RAID array.

First, let's start with hypothetical situations. We have a user with some drives who wants to eliminate SPoFs in his system. Since we can't remove the risk of a catastrophic failure (such as a CPU, motherboard or RAM failure), we'll ignore those for now. We can, however, reduce the risk of downtime due to a controller failure.
This might be a 3rd party chipset, a RAID card (not configured for RAID) or another HBA which connects drives to the system. RAID 0 will not be considered, since it has no redundancy.

Note: For clarification, RAID 5 represents single-parity RAID, or RAID Z1 (ZFS). RAID 6 represents dual-parity RAID, or RAID Z2. RAID 7 represents triple-parity RAID, or RAID Z3.

Note: FlexRAID doesn't support nested RAID levels.

[spoiler=Our user has two drives.]
Given this, the only viable configuration is RAID 1. In a typical situation, we might hook both drives up to the same controller and call it a day. But now that controller is a SPoF! To get around this, we'll use two controllers, and set up the configuration as shown:

Now, if we remove a controller, there is still an active drive that keeps the data alive! This system has removed the controllers as a SPoF.

[spoiler=Our user has three drives.]
With three drives, we can do either a 3-way RAID 1 mirror or a RAID 5 configuration. Let's start with RAID 1. Remembering that we want to have at least 2 controllers, we can set up the RAID 1 in one of two ways, shown below:

In this instance, we could lose any controller, and the array would still be alive.

Now let's go to RAID 5. In RAID 5, the loss of more than 1 drive will kill the array. Therefore, each controller may carry only one drive, and there must be at least 3 controllers to prevent any one from becoming a SPoF, shown below:

Notice that in this situation, we are using a lot of controllers given the number of drives we have. Note also that the more drives a RAID 5 contains, the more controllers we will need. We'll see this shortly.

[spoiler=Our user has four drives.]
We'll stop using RAID 1 at this point, since it is very costly to keep building the array. This time, our options are RAID 5, RAID 6 and RAID 10. We'll start with RAID 5, for the last time.
Remembering the insight we developed last time, we'll need 4 controllers for 4 drives:

This really starts to get expensive, unless you are already using 4 controllers in your system (we'll talk about this during the practical examples later on).

Now on to RAID 6. Since RAID 6 can sustain two drive losses, we can put two drives on each controller, so we need 2 controllers to meet our requirements:

In this situation, the loss of a controller will down two drives, which the array can endure.

Last is RAID 10. Using RAID 10 with four drives gives us this minimum configuration:

Notice that for RAID 10, we can put one drive from each RAID 1 stripe on a single controller. As we'll see later on, this allows us to create massive RAID 10 arrays with a relatively small number of controllers. In addition, using RAID 10 gives us the same storage space as a RAID 6, but with smaller worst-case redundancy.

Given four drives, the best choices look like RAID 6 and RAID 10, with the trade-off being redundancy (RAID 6 is better) versus speed (RAID 10 is better).

[spoiler=Our user has five drives.]
For this case, we can't go with RAID 5, since it would require 5 controllers, and we can't do RAID 10 with an odd number of drives. However, we do have RAID 6 and RAID 7.

We'll start with RAID 6. Here we need at least 3 controllers, but one controller is underutilized:

For RAID 7, we get 3 drives' worth of redundancy, so we can put 3 drives on each controller:

In this case, we need two controllers, with one being underutilized.

[spoiler=Our user has six drives.]
We can now start doing some more advanced nested RAID levels. In this case, we can create RAID 10, RAID 6, RAID 7, and RAID 50 (striped RAID 5).
RAID 10 follows the logical progression from the four drive configuration:

RAID 6 becomes as efficient as possible, fully utilizing all controllers:

RAID 7 also becomes as efficient as possible, fully utilizing both controllers:

RAID 50 is possible by creating two RAID 5 volumes and striping them together as a RAID 0:

Notice that we have reduced the number of controllers for a single-parity solution, since we can put one drive from each stripe onto a single controller. This progression will occur later as well, when we start looking at RAID 60 and RAID 70.

We can obviously do a RAID 10, but now we can also venture into the realm of RAID 70 (striped RAID 7), in addition to RAID 60.

Our RAID 60 is slightly underutilized:

Finally, we have our RAID 70:

Our RAID 70 is relatively inefficient (using 12 drives would be better, with six drives per controller). However, it allows for increased performance in addition to providing a huge amount of redundancy for this setup. This is definitely not recommended for anything other than mission-critical data or perhaps priceless memories (wedding video/photos, important docs, etc.).

In this case, we can do either a RAID 50 or RAID 7, both of which require 3 controllers.

For RAID 50, we have 3 stripes of RAID 5:

For RAID 7, we have 3 controllers which are all fully utilized:

Both configurations give us 6 drives' worth of space for 3 controllers, with the tradeoff, once again, being worst-case redundancy (RAID 7 is better) versus speed (RAID 50 is better). For configurations with more drives, we aren't going to look at RAID 7, since we'll require more controllers.

With eight drives, we can jump into the realm of RAID 60 (striped RAID 6 volumes). We also have the options of RAID 10, RAID 50, and RAID 7.

RAID 10 looks just like it did before:

RAID 50 looks similar, but now we need more controllers since each volume has more drives:

RAID 7 looks pretty similar too:

RAID 60 looks pretty efficient with this number of drives.
Logically progressing from RAID 50, we can put two drives from each stripe on one controller, for 4 drives per controller. This lets us use only two controllers:

As with four drives, we now have a tradeoff between worst-case redundancy and speed when choosing between RAID 60 and RAID 10.
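The controller counts in the walkthrough above all follow from one rule: no controller may carry more drives of a redundancy group than that group can afford to lose. Here is a minimal Python sketch of that rule. The function names are made up for illustration, and the RocketRaid drive count of 6 is an assumption (the remaining drives after the 8 on the IBM card).

```python
from math import ceil
from typing import Dict, List


def spof_controllers(drives_per_controller: Dict[str, int], tolerance: int) -> List[str]:
    """Return the controllers whose loss would take the array offline.

    Assumes one redundancy group that survives at most `tolerance`
    missing drives (1 for RAID 5, 2 for RAID 6, 3 for RAID 7).
    """
    return [name for name, count in drives_per_controller.items() if count > tolerance]


def min_controllers(group_size: int, tolerance: int) -> int:
    """Fewest controllers so that no controller holds more than `tolerance`
    drives of one redundancy group.

    For striped arrays (RAID 10/50/60/70), pass the size of ONE sub-array:
    each controller can hold up to `tolerance` drives from every sub-array.
    """
    if tolerance < 1:
        raise ValueError("an array with no redundancy cannot survive a controller loss")
    return max(2, ceil(group_size / tolerance))


# looney's array: 8 drives on the IBM card, the other 6 assumed to be on the
# RocketRaid card, two drives' worth of parity.
print(spof_controllers({"IBM": 8, "RocketRaid": 6}, tolerance=2))  # ['IBM', 'RocketRaid']

# Cases from the walkthrough
print(min_controllers(4, 1))  # RAID 5, four drives            -> 4
print(min_controllers(4, 2))  # RAID 6, four drives            -> 2
print(min_controllers(2, 1))  # RAID 10, two-drive mirrors     -> 2
print(min_controllers(5, 2))  # RAID 6, five drives            -> 3
print(min_controllers(5, 3))  # RAID 7, five drives            -> 2
print(min_controllers(3, 1))  # RAID 50, two 3-drive RAID 5s   -> 3
print(min_controllers(4, 2))  # RAID 60, two 4-drive RAID 6s   -> 2
```

Because striped arrays are sized per sub-array, adding more stripes adds drives per controller without adding controllers, which is why RAID 10 scales so cheaply here.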

-

Hey all, Does anyone have a good recommendation for a redundant PSU for a critical 24/7 server? I need something that is also able to fit in a standard ATX slot; this is my case: https://www.newegg.com/black-rosewill-rsv-l4500/p/N82E16811147164?Item=N82E16811147164. Thanks

-

Hey guys. I was wondering if anyone could help me figure out how to set up a backup solution in my home. I recently lost about 4 years of data, and I have some spare cash, so it's as good a time as any, I suppose. I want it to be reliable, so I did some research and I feel comfortable with RAID 10, for its mirroring and striping. I have never worked with any sort of backup solution like this, so I don't know where to begin. What drives should I get? Should it be a physical drive backup, or should I build a home server to act as a NAS? Any help at all is appreciated, advice as well, as my knowledge here is nearly zero. P.S. I'd appreciate it if you keep this semi-professional. I'm not a fan of asking for sincere help and receiving backlash like "that's stupid, why would you do that". Thanks.

-

Does anybody know of a redundant, SFX form factor, PSU?

-

Hi, I would like to run a Linux web server redundantly. I did some research, but found nothing useful. Does anyone have experience with this and want to give me some information? It is an Ubuntu server on which a website must run. For this website I also need a database, which must also be redundant. Thank you!

-

I was looking at making a FreeNAS server and looking at a boot drive; they say you can use a USB stick. If the USB stick dies, will it lose or corrupt data on the main drive? If that is the case, is it a good idea to use redundant drives? If not, what about redundant USB drives running in RAID 1? Thanks.

-

I've recently gotten a case with a big space for redundant power supplies: This isn't my exact case, and the plate with the two PSU mounts is missing. Does anyone know of something I could use to adapt from the existing mounting holes for a modular PSU to ATX? Bonus points if you have an idea for the hole above it as well, as I'm pretty sure I'll only need one power supply. EDIT: I've found what I need (sort of), but I still can't find a place where I can purchase something like this separately:

-

http://www.newegg.com/Product/Product.aspx?Item=N82E16817338062&cm_re=redundant_power_supply-_-17-338-062-_-Product I was looking into getting a redundant power supply for my workstation and wasn't sure if this would fit in your average case.

-

So just recently I did a clean install of Windows 10. Right after I installed it, I immediately downloaded the latest driver for my graphics card from the NVIDIA website. The problem is, when I look at what Windows Update is downloading for me, it includes the "latest" driver software for my graphics card. I checked the details and learned that it is older than the one I downloaded (May 2 update). I haven't installed the new graphics card driver I downloaded yet, because I might run into trouble with the one Windows is downloading for me. Has anyone had the same issue?

-

I have an Intel server (link) with a redundant 750W PSU (link) and want to add 6-pin and 8-pin PCI-E connectors to power a GPU. (And a link to the current specs of the server.) Is it possible to solder the 6-pin and 8-pin PCI-E connectors into the existing power supply (the conversion box, not the supplies), or would the better alternative be to run the server off an SFF PSU? If so, please recommend a reliable and efficient supply that won't care if the system is on 24/7.

-

So we finally have a plan for how we are going to digitize our VHS tapes and photos. We expect to get about 6TB of data, as we want the highest possible quality. We also have around 2TB of data on all of our devices, plus already-digital images and family videos on DVDs with about another 1TB of data. This gives a total of around 10TB of storage needed currently.

What we plan is a NAS to access all our data at home on every device, with user restrictions on some files. We also need a backup system, as we currently store backups on DVDs or external HDDs, which is not an option with growing amounts of data. As I am a complete noob regarding NAS and backup solutions, I only know that my budget is 650 Euro at most and that prebuilt consumer NAS solutions are not big enough and not redundant enough.

I intend to build a NAS in something like Fractal Design's Node 304, with a cheap Core i3 and an ITX mainboard. It has to be "safe", including safe to turn off accidentally, as I do not trust my parents that much with such things.... As for power consumption, we would like to shut it off completely when it is not in use or at night.

Then again, we need a backup solution which is only on when we make backups. It has to be redundant and failsafe, as it will store the same data as the NAS plus backups of our devices. I would even consider making backups on HDDs in external cases and storing them safely, so it is more important that we get a NAS. (E.g.: I would make backups on two different drives at once and put them back on my "IT shelf", which means I will migrate our infrastructure slowly and step by step, buying HDDs when one is at 95% capacity.)

So back to the NAS: What HDDs do I need, what cooling, what CPU (with iGPU), what PSU, what software? Note that I am new to NAS and home servers, but do have quite some experience with Ubuntu. As I do not know that much about this topic, what I wrote may be a little bit "weird".

I would start building the NAS by the end of April, and we intend to start the complete digitization on my rig first, using some of the NAS drives in it already, as a 120GB SSD + 1TB HDD are not enough at all.... Thank you in advance.

-

Case Study: RAID Tolerance to Failure

In this post I talked a lot about the pros and cons of running a RAID array, explained the different RAID levels, and covered some more specific aspects of RAID. In this snippet, I'd like to take a closer look at the tolerance of a RAID array to drive failures.

To do this, let's consider the storage configuration given here, by our own looney. Looney has a RAID volume with 14 2TB drives, and he wants to use it for storage of media and his computer backups. Let's consider the effects of various RAID levels on this volume, and what the pros and cons of each one are.

RAID 0: Without a doubt, this would be the fastest configuration. 14 drives in RAID 0 would give higher random performance and insane sequential performance. But what happens if a drive fails? Well, since a RAID 0 array has no tolerance to failure, he'd lose all his data.

Best Case: Not Applicable
Worst Case: Any one drive failure
Tolerance: None
Space: 28TB

If he were using the RAID array as a recording disk, he might want a RAID 0 volume. But media playback performance wouldn't benefit much from RAID 0, and his home network would bottleneck at ~125 MB/s with gigabit Ethernet. Plus, that puts his entire movie collection at risk, as well as all his backups. Clearly, this isn't the choice for him.

RAID 1: Without a doubt, this would be the safest configuration. 14 drives in RAID 1 would have okay performance in both writes and reads. It can also sustain the loss of up to 13 drives, so he wouldn't have much to worry about.

Best Case: Not Applicable
Worst Case: All drives fail
Tolerance: 13 drives
Space: 2TB

The problem with this configuration is that he doesn't end up with much space, so he can't have much of a media collection or many backups of his computer. This also isn't the configuration for him.

RAID 5: This is the most space-efficient redundant configuration. 14 drives in RAID 5 would have good read performance, but would take a hit in write performance.
It can also sustain the loss of any one drive, so he has less to worry about as far as data reliability goes.

Best Case: Not Applicable
Worst Case: Any two drives fail
Tolerance: 1 drive
Space: 26TB

With this configuration, looney gets his space, and some redundancy. This wouldn't be a bad choice for him, but let's see what else there is.

RAID 10: This configuration wouldn't impact write performance at all, and would give us some redundancy. 14 drives in RAID 10 is 7 RAID 1 arrays striped together, so we get pretty beast performance. However, in this case, redundancy has a best and a worst case. For instance, if every drive we lost came from a different RAID 1 stripe, we could lose up to 7 drives, because each stripe would still have another drive to keep it going. On the other hand, if we lose two drives from the same RAID 1 stripe, the entire RAID volume fails.

Best Case: 7 drives fail, one from each stripe
Worst Case: Two drives from the same stripe fail
Tolerance: 1-7 drives
Space: 14TB

With this configuration, looney gets good performance, but only gets half his maximum space. While it's true that he could tolerate a large number of drive failures, he might want a higher tolerance for the worst-case scenario.

RAID 6: Basically a safer version of RAID 5, using two drives' worth of space for parity. 14 drives in RAID 6 would have good read performance, but would take a very large hit in write performance. It can also sustain the loss of any two drives, so he has even less to worry about as far as data reliability goes.

Best Case: Not Applicable
Worst Case: Any three drives fail
Tolerance: 2 drives
Space: 24TB

With this configuration, looney gets lots of space, and even more redundancy. This would be a great choice, since much of what he does is read from his array, and backups can be run overnight, making the write performance less of an issue.
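The space and tolerance figures for RAID 0, 1, 5, 10 and 6 above can be reproduced with a few lines of arithmetic. This is only a sketch: `raid_summary` is a made-up helper name, and RAID 10 is assumed to be built from two-drive mirrors, as in the post.

```python
from typing import Dict, Tuple


def raid_summary(drives: int, size_tb: int) -> Dict[str, Tuple[int, int, int]]:
    """Map RAID level -> (usable TB, worst-case tolerance, best-case tolerance).

    Tolerance is the number of failed drives the array survives.
    RAID 10 is assumed to use two-drive mirrors, so `drives` should be even.
    """
    pairs = drives // 2
    return {
        "RAID 0": (drives * size_tb, 0, 0),           # no redundancy at all
        "RAID 1": (size_tb, drives - 1, drives - 1),  # every drive is a full copy
        "RAID 5": ((drives - 1) * size_tb, 1, 1),     # one drive's worth of parity
        "RAID 6": ((drives - 2) * size_tb, 2, 2),     # two drives' worth of parity
        "RAID 10": (pairs * size_tb, 1, pairs),       # one failure per mirror at best
    }


# looney's volume: 14 drives of 2TB each
for level, (space, worst, best) in raid_summary(14, 2).items():
    print(f"{level}: {space}TB usable, survives {worst} to {best} failed drives")
```

Only RAID 10 shows a spread between worst and best case here, because its tolerance depends on which drives fail rather than just how many.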
RAID 50: This configuration would give us increased performance over RAID 5, and would still give us some redundancy. 14 drives in RAID 50 is 2 RAID 5 arrays striped together, so we get better read and write performance. Once again, redundancy has a best and a worst case. For each RAID 5 stripe we can lose a maximum of 1 drive. If we lost two drives, one from each stripe, we would still have our array. However, if we lost two drives from the same stripe, we would lose the whole RAID volume.

Best Case: Two drives fail, one from each stripe
Worst Case: Two drives from the same stripe fail
Tolerance: 1-2 drives
Space: 24TB

With this configuration, looney gets the same minimum tolerance as RAID 5, and more performance at the cost of another hard drive. This isn't a bad configuration, but it would require some advanced hardware to do, and it depends on his desired tolerance.

RAID 60: This configuration would give us increased performance over RAID 6, and would still give us the redundancy of RAID 6. 14 drives in RAID 60 is 2 RAID 6 arrays striped together, so we get better read and write performance. Aaaand, on to redundancy. For each RAID 6 stripe we can lose a maximum of 2 drives. If we lost four drives, two from each stripe, we would still have our array. However, if we lost three or more drives from the same stripe, we would lose the whole RAID volume.

Best Case: Four drives fail, two from each stripe
Worst Case: Three drives from the same stripe fail
Tolerance: 2-4 drives
Space: 20TB

With this configuration, looney gets the same minimum tolerance as RAID 6, and more performance at the cost of two more drives. Like RAID 50, this requires advanced hardware to do, but still gives us the redundancy we'd like.
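The RAID 50 and RAID 60 numbers above follow the same pattern, so they can be worked out with one small function. This is a sketch under the post's assumption that the drives are split evenly across the striped sub-arrays; `striped_parity` is a hypothetical name.

```python
from typing import Tuple


def striped_parity(drives: int, groups: int, parity: int, size_tb: int) -> Tuple[int, int, int]:
    """Return (usable TB, worst-case tolerance, best-case tolerance).

    `groups` parity sub-arrays are striped together; each sub-array gives up
    `parity` drives' worth of space (1 for RAID 5, 2 for RAID 6).
    """
    if drives % groups != 0:
        raise ValueError("drives must divide evenly across the sub-arrays")
    per_group = drives // groups
    usable_tb = groups * (per_group - parity) * size_tb
    worst = parity           # all failures land in the same sub-array
    best = groups * parity   # failures spread evenly over the sub-arrays
    return usable_tb, worst, best


print(striped_parity(14, 2, 1, 2))  # RAID 50 -> (24, 1, 2)
print(striped_parity(14, 2, 2, 2))  # RAID 60 -> (20, 2, 4)
```

The worst-case value never depends on `groups`, which is the point made in the final thoughts: striping adds performance and best-case luck, not guaranteed redundancy.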
----------------------------------------

With all this in mind, how do we choose a RAID volume? We know that one requirement is that it should be redundant, since he's storing lots of data that would be hard to replace, plus backups which would be bad to lose if another of his computers went down. We also know that high performance isn't necessary, and that he needs lots of storage space. Given these requirements, we can pick RAID 5, 6, 50, and 60 as our viable candidates. So which one should he go with?

SPOILER ALERT: He chose RAID 6.

Based on his choice, he might not have wanted to go with a hardware solution (expensive, and a single point of failure), or didn't need the performance of a hardware solution to provide RAID 50 or 60. He also might have wanted the redundancy of RAID 6 over that of RAID 5.

----------------------------------------

Final Thoughts: Looking at striped volumes, I think it's useful to recognize that striping nested RAID volumes does not increase worst-case redundancy, only performance, and at the cost of more drives. In a striped RAID, your array is only as redundant as the lowest RAID level. In RAID 50, that's RAID 5. In RAID 60, that would be RAID 6. In RAID 10, that's RAID 1. When chance is on your side, a striped redundant array can provide additional benefit. But for those who want to be absolutely sure, it doesn't help much.
Nesting RAID volumes under a redundant outer level can increase redundancy. Unfortunately, there are no hardware RAID cards that support such RAID arrays, like a RAID 1 array of nested RAID 6's (RAID 61?). That would survive any five drive failures (data is lost only once both RAID 6 halves have each lost three drives), but in looney's case, he would only get 10TB of space (7 drives per RAID 6, 5 drives of space available per RAID 6, and only 1 RAID 6 worth of storage). RAID 65 (a RAID 5 of nested RAID 6 volumes) would be intense, but he'd only be able to use 12 drives (4 drives per RAID 6, 3 RAID 6's for the RAID 5), and would get two RAID 6's worth of space, for a total of 8TB. Also, good-bye to write performance.
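The space figures for these nested layouts can be checked the same way. In this rough sketch, `nested_capacity_tb` and its parameter names are invented for illustration, and the outer level is described only by how many inner arrays' worth of space it consumes (1 for a two-way mirror or for RAID 5).

```python
def nested_capacity_tb(inner_drives: int, inner_parity: int,
                       inner_count: int, outer_overhead: int,
                       size_tb: int) -> int:
    """Usable space of a redundant outer RAID built from identical inner arrays.

    inner_drives   -- drives in each inner array
    inner_parity   -- drives' worth of parity per inner array (2 for RAID 6)
    inner_count    -- number of inner arrays
    outer_overhead -- inner arrays' worth of space used by the outer level
    """
    inner_tb = (inner_drives - inner_parity) * size_tb
    return (inner_count - outer_overhead) * inner_tb


# RAID 61: two 7-drive RAID 6 arrays, mirrored
print(nested_capacity_tb(7, 2, 2, 1, 2))  # 10

# RAID 65: three 4-drive RAID 6 arrays in a RAID 5
print(nested_capacity_tb(4, 2, 3, 1, 2))  # 8
```

Both layouts end up with well under half of the 28TB of raw space, which is the cost being pointed out above.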