Search the Community

Showing results for tags 'raid'.

-

Kind of a simple question, but not really. I have a 2U server in my basement with Datacenter 2k22 on it. It has a bunch of roles and it's in a forest with a few old laptops. Nothing "too" special about those machines, just backups for the domain, AD DS (Active Directory), and crucial/barebones "helpers" for the server. Anyway, I've got a bunch of stuff that can go into cold storage, but obviously I don't wanna pay for or use external services. Simple reasons for this: potential cyber-incidents with X company, but most of all human error. Say, for example, I set up autopay, lose my card, and then forget; now my failsafe data storage is in limbo. Given my email is packed with junk most of the time, it would be something easily overlooked. Maybe the data side of the server would be helpful:

- A 16x PCIe 4.0 NVMe, uh, thing. It has 4 NVMe drives in it, all Kingston Furys at 1TB, on RAID 1, bitrification or whatever
- 8x SAS drives, RAID 10, all HDDs, Seagate Constellations, I'd like to say 4-8TB each? Of course the capacities and models are all the same. Disk manager says 14g and some odd megs, so I'm thinking 4TB each
- The boot drive is irrelevant, but it's an Intel something enterprise SSD, guessing probably 128GB or less, on SATA
- I'd like to say at least 4 Samsung Pro/QVO SSDs at 1TB each, RAID 0, just used as temp storage before I shovel copies to other "drives"
- A simple 4TB USB3 drive, Seagate HDD
- Another simple 14TB HDD via USB3; that one's a 3.5 inch drive and only one, Western Digital

All this junk is linked to the other PCs, network mapping, whatever. Nothing special. I definitely don't wanna (or do I?) use the MSFT Storage Spaces role, I forget what it's called. Basically it takes the free space of my other laptops/DCs and combines the drives. All that would mean is if one goes down on the network, then poof. Probably better off network mapping the laptops' multiple drives independently per machine; one has 3, another 4. RAID isn't set up yet, but that's not a concern at the moment. All Samsung 1TB EVO/QVO drives.
The slowest machine is still rocking an HDD and only a 2-core CPU, so not much point throwing money or anything at it. So, with all this stuff, any ideas for cold storage? Apparently bit rot is a real thing, so yeah. Apparently DVD-R backups have issues too: even if kept safe without scratches, bit rot yet again. I'm debating investing in one of those tape deck things, slow but often used for cold storage. But then the magnetic reel is always prone to "something". The only reason for the inquiry is because, duh, we live in a digital world. It isn't like going in the basement and busting out a shoebox full of photos is practical these days. My phone alone has ~72,000 photos on it. Say I die in a car crash or something; at least my daughter would figure out how to access pictures of her birth and my entire life, prior and current. Of course there's a crapload of movies, music and games too. Man, what was that film? Oh, let's check dad's backup cache, because that's where it would be. Can't find it online anymore. Same goes for games; even after a while I check my stuff and say DAMN, I forgot about this one! Boom, now I'm playing Kingpin or Soldier of Fortune. I'm only 36, but who knows, right? Should something happen, my lifetime's worth of data could be passed on to my child. It's a worthwhile thought, right? Any ideas out there? I'd "burn" vinyl records with .rar files if I could; aside from warping, at least it isn't magnetic storage...?

-

I just want to hear it straight out from someone who has done this before. What is the best raid configuration for HDDs prioritizing speed over redundancy? I hear constantly that HDDs are terrible ideas for NAS but if configured properly they can have good speed? Is it possible to saturate a 2.5gigabit or even 10gigabit connection with the right raid configuration with HDDs?

-

I work for "Insert Major Company Here", and we are moving warehouses and scrapping old inventory that hasn't moved. So, I have acquired two National Instruments HDD-8265s that I would love to set up in my rack at home as a JBOD. However, they are PXI chassis, and that is way out of my wheelhouse. There is no motherboard, just an ARC1882IX-12/16/24 Ver. 3A RAID card, which is connected to, and powered by, a PCIx16 Slot Card OSS-ECA-1x4-1x6. This seems to be how it's intended to connect to the host system; however, I don't have the cable for this, and it costs at best $700. I know the RAID card is able to do what I want, but without a way for it to talk to a host system to set it up, it's basically just a brick. I had one idea, but I'd rather run it by someone who knows more about this than I do than spend any more time trying to rig a way around the inevitable. Q: Can I put this RAID card in a free PCIe slot in my home server, while leaving all the MiniSAS ports connected to the drives in the PXI chassis, do the setup for the RAID card in the BIOS, then place it back into the PXI chassis and use the web-based UI to configure the rest? It makes sense in my head, but that could be due to a lack of insight. The only other thing I don't know how to accomplish is turning on the PXI chassis itself, as it's designed to receive a signal from the host PC and does not have a power-on feature. I appreciate any insight, feedback, or knowledge. HDD-8265.pdf ARC1882_series_Quick_Start_Guide.pdf

-

Idk if that belongs here, but I have a question about unRAID and how it handles redundancy. I understand how RAID 5 works, the other disks can combine their data to form a new disk if one fails. But that's striped across the drives. I also understand that unRAID is fundamentally different and does not stripe data (fills disks individually) but I wanna know how some scenarios would work out. Imagine a setup like this on unRAID: The parity disk can be reconstructed since the data is still on the other disks. Disk A, B and C can easily fit on the parity disk. But what if disk D fails? Where is the parity for that one?
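For anyone picturing the scenario above, here is a minimal sketch of how single parity can cover disks of different sizes. It assumes the parity is a plain byte-wise XOR across all data disks, with shorter disks counted as zeros past their end, which is why the parity disk has to be at least as large as the largest data disk. The disk contents and the helper names `xor_parity` and `rebuild` are made up for illustration; this is not unRAID's actual code.

```python
from functools import reduce


def xor_parity(disks: list[bytes]) -> bytes:
    """Byte-wise XOR across all disks; shorter disks count as zeros past their end."""
    size = max(len(d) for d in disks)
    padded = [d.ljust(size, b"\x00") for d in disks]
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*padded))


def rebuild(surviving: list[bytes], parity: bytes, lost_size: int) -> bytes:
    """Recover one lost disk by XOR-ing the parity with every surviving disk."""
    return xor_parity(surviving + [parity])[:lost_size]


# Hypothetical data disks of different sizes; D is the largest.
disk_a = b"AAAA"
disk_b = b"BB"
disk_c = b"CCC"
disk_d = b"DDDDDDDD"

# The parity disk holds one XOR byte per position, not copies of A, B and C.
parity = xor_parity([disk_a, disk_b, disk_c, disk_d])

# Losing the big disk D or the small disk B is handled the same way.
recovered_d = rebuild([disk_a, disk_b, disk_c], parity, len(disk_d))
recovered_b = rebuild([disk_a, disk_c, disk_d], parity, len(disk_b))
```

Under that assumption, the parity disk never "stores" any individual disk, so there is no separate place where the parity for disk D would have to live. Any single disk is recomputed from the parity plus all the others.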

- 6 replies

-

Over a year ago my home server's boot drives failed, and since then I haven't gotten around to setting it back up or recovering that data (busy with school). I now finally have some time on my hands and was trying to import the zpool from the old server on a new computer. One of the four drives I had in the zpool seems to have failed. How can I recover the data from this pool? Or is it gone?

    $ sudo zpool import
       pool: Storage
         id: 9852532683918131691
      state: FAULTED
     status: The pool was last accessed by another system.
     action: The pool cannot be imported due to damaged devices or data.
             The pool may be active on another system, but can be imported using
             the '-f' flag.
        see: https://openzfs.github.io/openzfs-docs/msg/ZFS-8000-EY
     config:

        Storage                                   FAULTED   corrupted data
          raidz1-0                                DEGRADED
            c0b73961-6484-4bba-937c-17b861467448  ONLINE
            d9e047e6-efe4-492c-96c1-94912d1c824d  ONLINE
            8c3fff5f-c9a8-40c8-8e09-fac69e7dff2c  UNAVAIL
            299a799c-4d57-44c0-ab01-26a4b9699598  ONLINE

    $ sudo zpool import -fF Storage
    cannot import 'Storage': I/O error
            Destroy and re-create the pool from a backup source.

-

I have a gaming PC with an Intel i9 processor, and everything is Intel compatible, but about a year after I bought it, Windows Update started giving me a popup that says it can't update any further until I fix the RAID issue. The PC works great otherwise. The properties on the hard drive show an AMD RAID. My PC has an M.2 with Windows on it and a SATA drive with all the rest of my programs and data. Is it worth the time to fix, and if so, is there a way to fix it without reinstalling everything?

-

Is it possible to set up ZFS/Btrfs RAID with just 1 drive and add more drives in the future? I just purchased one 20TB IronWolf and am planning on buying another later this year for my Jellyfin/Nextcloud server. I plan on getting another 20TB NAS drive in the future, and I don't want to deal with erasing the drive; I just want to add the new drive and enable redundancy. Then add a 3rd for RAID 10.

-

So I just recently bought an 1tb M.2 NVME drive, and already have a 1tb HDD in my PC. I was wondering what the best use for these would be. I was thinking of setting up RAID 1 but I read in some places online that RAID can be buggy with NVME drives. Should I do it or is it better to just have 2tb of storage. I don't really have anything important worth backing up, but I don't really need 2tb of storage either.

-

I have a media Nas which simply stores video for a jellyfin server. It's not anything critical and can simply be recreated if lost, though it'd be annoying. I have the OS and programs on an m.2, and a small ssd i could use for cache with primo cache or something. The actual storage is 5x 4T HDD drives, so it seems perfect for a software raid5 setup but there's apparently been a 1000 year war on why raid is good or bad so I really can't find a consensus on what's good for my setup. A flavor of raid or windows storage spaces or whatever else. I'd much like to keep 16T of it usable or 12T at the least. If no style of striped schema works I'll just span them.
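The 16T and 12T figures above line up with single and dual parity on five 4T drives. A rough sketch of that arithmetic, assuming equal-size drives and ignoring filesystem overhead and the TB/TiB difference (`usable_tb` is a hypothetical helper, not part of any RAID tool):

```python
def usable_tb(drive_count: int, drive_tb: float, parity_drives: int) -> float:
    """Usable capacity of a parity array built from equal-size drives."""
    if not 0 <= parity_drives < drive_count:
        raise ValueError("parity_drives must be between 0 and drive_count - 1")
    return (drive_count - parity_drives) * drive_tb


single_parity = usable_tb(5, 4, 1)  # RAID 5 style: one drive's worth of parity
dual_parity = usable_tb(5, 4, 2)    # RAID 6 style: two drives' worth of parity
spanned = usable_tb(5, 4, 0)        # plain span: no parity, no redundancy
```

So single parity keeps 16T usable, dual parity keeps 12T, and spanning gives all 20T with no protection against a drive failure.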

-

Hi everyone, what are your experiences with external storage in Sonoma? Some of us are having serious issues: lost volumes, unmountable drives, data loss. https://discussions.apple.com/thread/255188289?sortBy=best https://forums.macrumors.com/threads/how-do-i-allow-access-to-removable-volumes.2376842/ My very expensive LaCie 6big got corrupted, and the replacement is also acting up. Seagate and Apple are blaming each other; they made me reformat my boot drive and completely reinstall macOS, which did not solve the issue.

-

I was given an IBM X3650 M3. I am unable to get any operating system to work on it. I am now just trying to get windows 10 on it. I know that this is stupid and not using the machine to its full potential and thats ok. I get into the windows 10 installer but it does not recognize my drive. The sas backplane is hooked up to an LSI raid card. I have read that I need to load the drivers to a usb. When I do this and select the flash drive under load drivers in installation, it is unable to find any drivers. Please help. What am I doing wrong?

- 18 replies

-

Hi, I have a Gigabyte GA-Z77X-UD4H (Windows 7). I set up Intel Rapid Storage Technology a long time ago, and it ran fine until today. There was an error while I was virus scanning (which I do every month): I woke up to a BSOD, and Intel Rapid Storage Technology was the culprit, so I uninstalled it. But I cannot set my BIOS back to AHCI mode; if I do, Windows crashes at the loading screen, while if it is set to RAID it works fine. I looked at the boot options, and neither my HDD nor the SSD (which I use only for Intel Rapid Storage Technology) was detected. The only things detected were the optical drive and some SATA drive that seems to be one of my HDDs, but not really; I don't know why. If I boot in RAID mode I have no problem loading into everything, but I am not running RAID at all, and it boots slowly. I have removed all RAID volumes, uninstalled and reinstalled the storage drivers, reset the CMOS, taken out the battery, and even unplugged and replugged the SATA cables, but it still will not let go of RAID, so I'm stuck with it instead of AHCI, which is what I want now. I think my SSD died, because Windows only detects my HDDs. The computer runs fine so far; I just don't like it being stuck on RAID when no RAID is set up. I even got the latest drivers for everything on my motherboard. I think it's because my SSD died, but I don't understand why the BIOS would not detect the other HDDs. Windows shows them, but the BIOS does not. Please help me fix this problem, and thanks!

-

Today I decided to update my MSI motherboard (z170 Krait Gaming) to it's latest BIOS version. I used MSI's Live Update software to do so, and it seemed to go perfectly. The only snag was that I attempted to boot in the default AHCI mode to start, before remembering to set it back to the RAID mode. Unfortunately, though my system now boots off my SSD just fine, my main storage volume made up of 2 HDD's in Raid 0 has failed to show up. The BIOS RAID screen shows the raid as "failed" with 1 disk registering as a member and the other showing up as non-raid. Could my attempt to boot in AHCI have caused this? Is there a way that I can go about figuring out if my raid is corrupted or if there is a way to recover the raid or recover the data? I'm kinda freaking out over here (It's literally ALL my data) and while I know that RAID 0 isn't the safest thing, I guess I was looking out for drive failure rather than BIOS updates as being the possible death of it. Thanks for any help in advance! PS: I have made every effort to not send any read or write requests to the (inaccessible) drive that does show up in my OS. EDIT: FIX THAT WORKED FOR ME: http://www.overclock.net/t/478557/howto-recover-intel-raid-non-member-disk-error

-

I wish to setup a RAID array within my main computer for storing audio (band recordings). I want to do it internally to the computer I use and not on a NAS because I don't have the money for high speed networking. I'm not sure what is the best option to achieve this between windows storage spaces, the RAID mode for storage devices on my motherboard, or a dedicated RAID card. I would like to do RAID 5 with 3 SATA HDD's. My boot drive is a single separate NVME SSD.

-

Hello! I've ended up in a bit of a struggle. My old PC's motherboard broke, and on that PC I had two SSDs set up in RAID 0 with Intel Rapid Storage Technology from the BIOS (the old motherboard was an ASUS Maximus VI Hero). Now that I've replaced the CPU, motherboard and memory with a new i3-12100F setup, I can see the RAID volume in the BIOS, but I can't boot into the OS, which is on another disk. As can be seen from the pictures, if Intel Rapid Storage Technology is enabled, the BIOS does find "Intel Games RAID", but isn't able to pick up the boot partition from the Kingston disk. If I disable Rapid Storage Technology, then the Windows boot partition can be found on the Kingston disk, but again, Intel Games RAID isn't detected. Might there be some setting that I'm just missing, so the BIOS handles the two non-RAID disks as normal SATA disks and the two RAID disks as RAID? Specs for the PC: ASUS Prime B760M-K D4, Intel Core i3-12100F, some G.Skill DDR4. Thanks for any help!

-

Okay, so I'm new to this page, but I've been a tech enthusiast for many years. I know a base amount about how to build computers and how everything works. But one thing I admit is that I am not an expert, but I have big ideas. But anyways to my questions... I really want to build a new computer that fully supports ECC. I know it's one of those things that really doesn't matter to some, but I'm looking to go for a computer that is built to last, and also is going to have a simple RAID set-up, and an external firewall in addition to the one in the software. I am trying to do something well maybe is a bit insane. So please I ask ppl to be open minded. I need to know how I can modify a pc to run 2 separate raid set-ups that doesn't necessarily need to be able to function at the same time. See I'll be running 2 different operating systems, windows vista & the other is optional maybe windows 10 or 11. Most likely 10. I'll switch between hard drives in the bios with the bios set to run at start up. And I want a back up hard drive set up for each OS as the RAID. And, because I'll be running an older OS I want to add ECC support to help with any errors caused by outdated software, drivers, or whatever. I know I'll get a lot of responses about why in the sam hill I need this kind of set up, well, lets just say I'm married and my wife is quite attached to an old game her siblings used to play a long time ago. Anyways, moving on lol. Due to the older OS that's why I am also adding an additional firewall hardware on the network since it's likely Windows vista isn't updated with the latest protection anymore. As far performance in hardware I'm not necessarily looking for high end, but something in-between, not too hot but not sub-standard either. I did a little research already and I guess AMD still has boards that still enable ECC, and that most or maybe all intel ones don't anymore. My preference is at least 10 cores for the processor with a base clock of at least 3.2 GHz. 
And at least 64 GB of memory. I'll be using this pc for a lot of moderate and occasionally heavy processing operations, mostly on the windows 10 OS. So first things that come to my mind is does all the hardware need to be ECC compatible? I would assume so, like the CPU, motherboard, ram, and I wonder about if ECC would be necessary for the GPU?... probably not idk u tell me. I'll only be using a basic GPU not for gaming primarily, but more for a modest touch in capability, and streaming. What should I do to prepare to put together a rig like this? Any Adaptors? Drivers? Reformatting? Details beyond the normal standard knowledge of making a pc is what I'm looking for. Even if you don't have the complete list of answers if you at least know one piece of the puzzle it's appreciated. I know what I am looking for I just need some guidance on how I may implement my ideas here. I know how to build a basic computer, but just not with any of these additional crazy stuff lol. I'm open to anything that'll make this happen, even if it's out of the box thinking.

- 5 replies

-

My cousin found a Dell R410 Power Edge in the trash (University dumpster dive). He gave it to me because I like to tinker. I got it working and it worked great with the original drives, but I want to use larger 4TB SAS drives, in four bays, for total of 16TB. Unfortunately, the Dell Raid set up only sees each drive as 2TB. Any thoughts on what I'm doing wrong? Thanks!

-

Howdy! I'm looking to build a proxmox server and my plan is to use a 1tb m.2 for running the VMs on and then configure some hard drives for storing the data on my NAS. What is the best way to do this? I hear a lot about different raid configurations, and I have no idea how I need to set mine up because there's so many different options. I'm not looking to keep it up and running 24/7 and if it goes down because one drive failed that's fine. All I'm looking to is have one backup drive in case one fails and I cannot recover the data. Any recommendations? And thank you for your assistance!

-

Hi there fellow g33ks, I run a fairly small homelab, which mostly runs on Dell servers running VMware for virtualization purposes. Recently there was a power outage, which is normally no big deal, but this time the UPS didn't last long enough, and the shutdown events failed to be sent to the hypervisor. So, long story short... 1 of the 3 RAIDs in my Dell R730 is lost. Some information: Server: Dell R730 Controller: H730P Disks: 4 x SAS SSD 12 Gbps (directly from Dell) RAID: RAID 10. After the power came back, all 4 of the disks were flashing orange, and when powering on the server, it was not showing the "Press F to import foreign configuration" prompt. So I entered the controller (Ctrl + R) and it found no virtual disks for this pool, and when I go to the disk overview, it seems like all 4 disks are failed, which I think is very weird. I know that power outages are horrible, but losing all 4 SSDs seems unlikely. I also tried to see the information via iDRAC, which tells me basically the same thing, that all 4 disks are DEAD. What I've tried is: 1) Reboot the server 2) Reseat the drives 3) Clear any cache on the controller 4) Plug the disks into another server with the same controller (same model, but a different controller). PS: I have a backup of most of it, but there were some dev projects that did not get backed up in time, so those would be lost. It's not the end of the world, but it would be nice to have them, and to get 4 rather expensive SSDs to work again. Any help is appreciated.

-

Hi there! I have an ASUS z170 DELUXE motherboard and today I decided to set up 2x3TB WD Blue HDDs in Raid1 mode for reliability (after my single 3TB started giving errors). The thing is, when I setup them in Raid0 (in the BIOS), the read and write performance almost doubles, but in Raid1 mode there is no difference... Am I doing something wrong here? Thanks in advance!

-

Hi, I currently have a Samsung 950 PRO 256GB SSD that I have used as a boot drive for over 8 years. I recently got a Samsung 970 EVO Plus 1TB SSD to replace it. However, in my system I have a pair of Corsair Force LS 480GB SSDs that I run in software RAID 0 within Windows. I would like to know if my software RAID would be affected by the cloning process. Because both my M.2 slots are already in use, would using an M.2 SSD enclosure be an issue? I am doing the cloning on the boot system itself; are there any implications for that? Clonezilla seems like the best tool for the job, but it looks very tedious, and since I'm only going to do this once, can I get a recommendation for an alternative that is FREE and easy to use?

-

I had two HDDs in a RAID 1 and had a sudden power cut, after which Windows stopped detecting the partition. I checked in the BIOS, and both drives seem to be healthy, they both show up and their SMART status doesn't show any errors. When I go into the array properties however, the status is "Failed", and the array doesn't show any drives associated with it, and likewise, the drives' properties don't show any associated arrays. How do I troubleshoot this? As far as I can tell, the data might be fine, and it's only the array that got corrupted, but I couldn't find any articles about recovering from something like this, and obviously I'm afraid of trying anything on my own, I don't want to lose any data. I'd appreciate some help.

-

Budget (including currency): $50? Country: United States Games, programs or workloads that it will be used for: everything Other details (existing parts lists, whether any peripherals are needed, what you're upgrading from, when you're going to buy, what resolution and refresh rate you want to play at, etc): n/a Currently working on upgrading from 7th gen to 13th gen Intel, I have everything planned out and I think it looks super clean (founders edition 4070 looks a tad out of place and small lol) So, I have this Asus M.2 PCIE 4th gen adapter, and it matches the board nice, except for the bare black PCB on the back side, under my GPU. Imo, it looks very bland. I wanted to know if anyone had any opinions on it, I was looking into if it would be safe to paint the PCB white, with safety precautions for the connections on the back, or if maybe I could aquire a brushed aluminum backplate to match everything else? Let me know what you think. (Side note. I use the adapter to make a M.2 raid 0 array from used 256gb drives that are in good running condition at work (I work as on site IT support))

-

I'm pretty new to NAS but I have a decent understanding of how it works... I think... My plan was to slap together some hardware for a multi-purpose home server which would include running my website, a NAS for personal use, and perhaps some minor game servers for myself and some friends (Link for anyone curious about the build). I can run most of what I need off of the boot SSD, but the NAS part needs a bit more storage, so I was planning to run 4 8TB disks in RAID 5, but want to mitigate some of the read/write losses from using spinning storage by using a pair of SATA SSDs in RAID 0. Is this even possible with any free software available on Ubuntu Server?

-

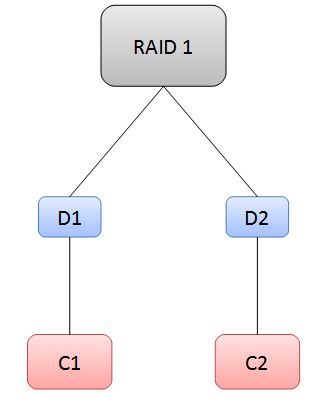

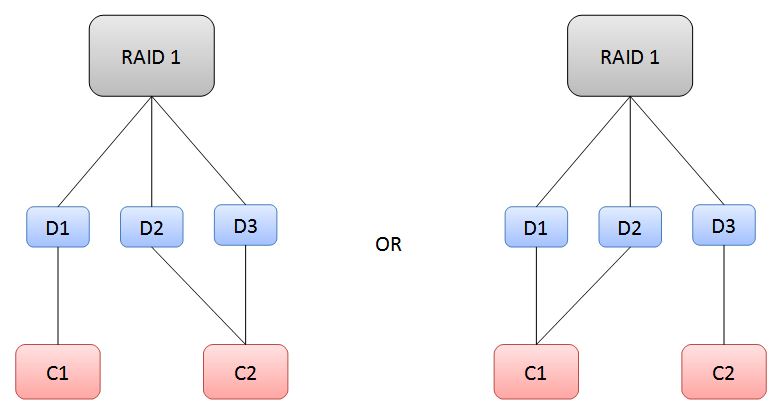

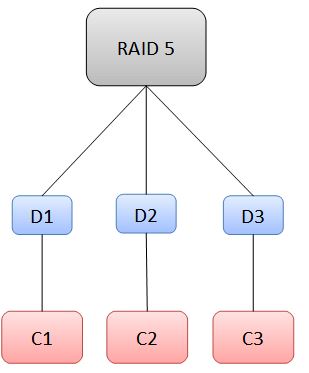

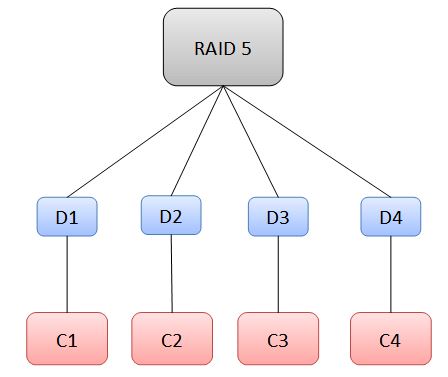

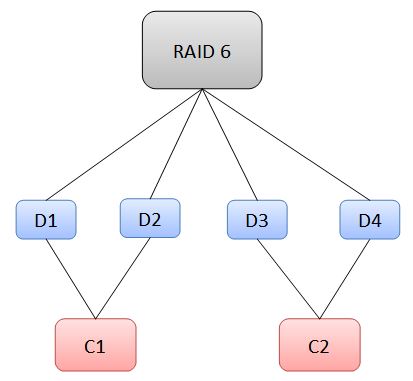

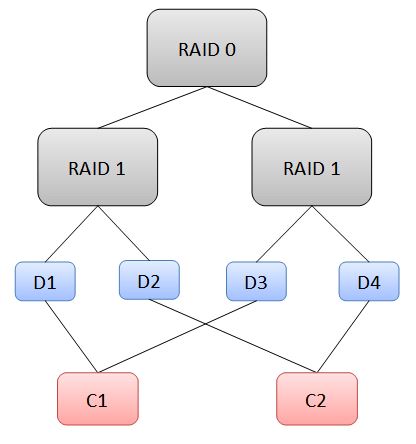

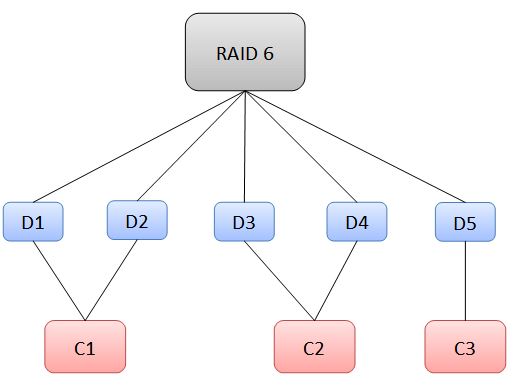

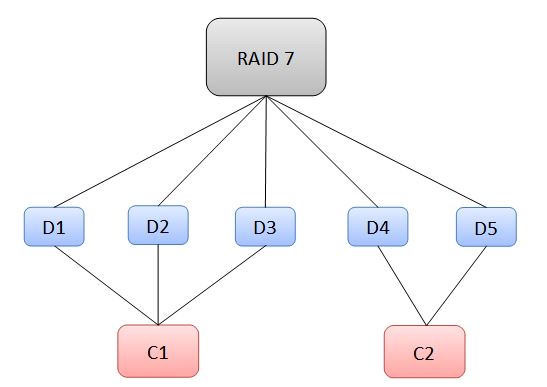

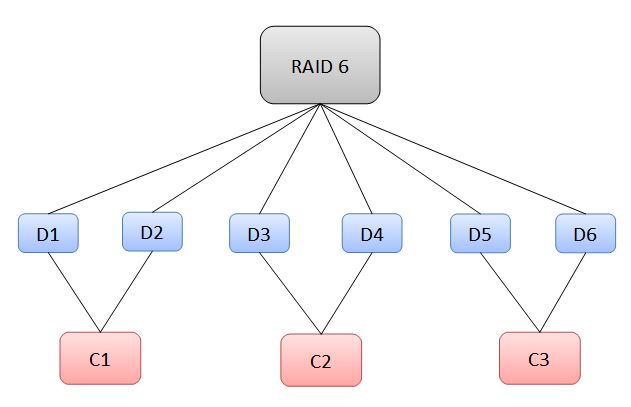

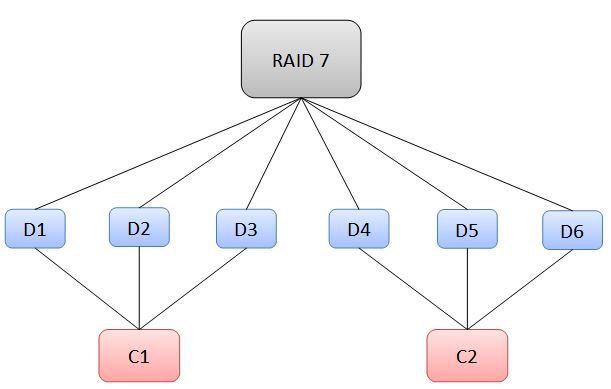

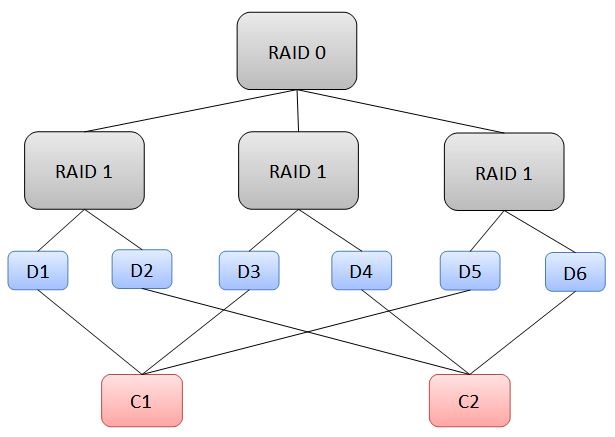

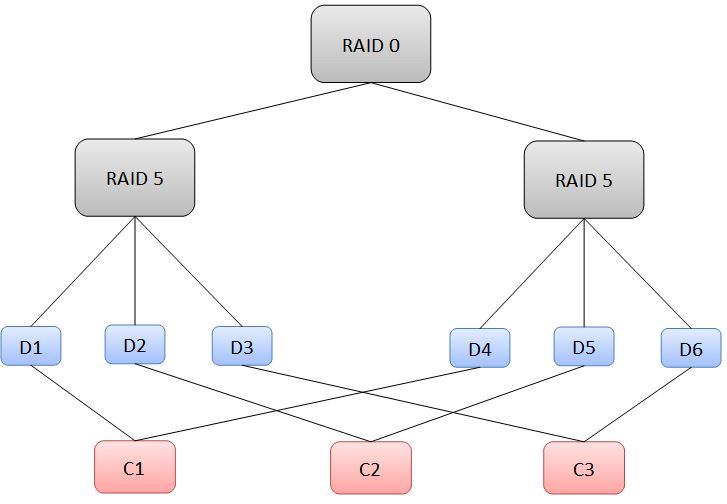

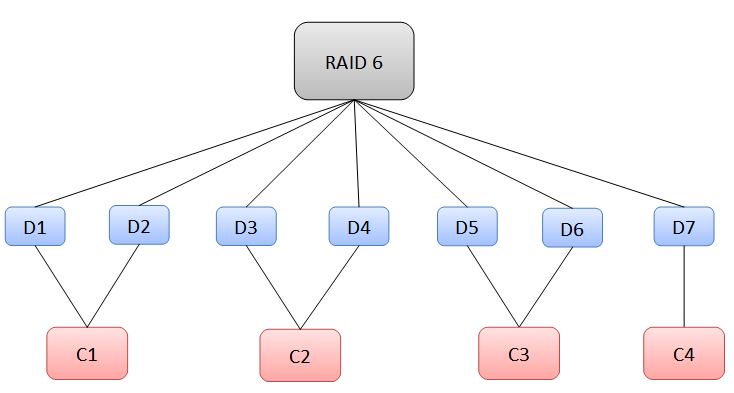

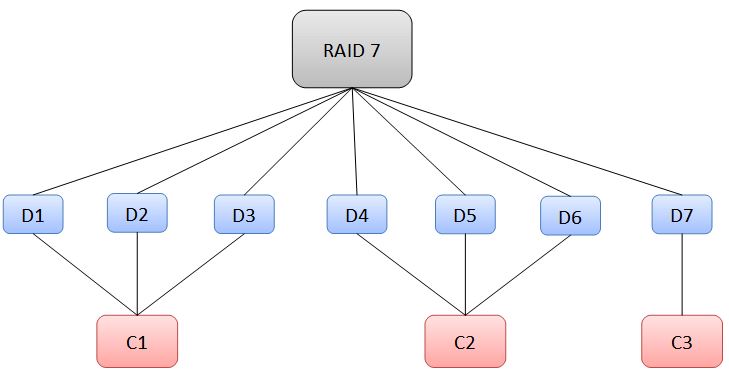

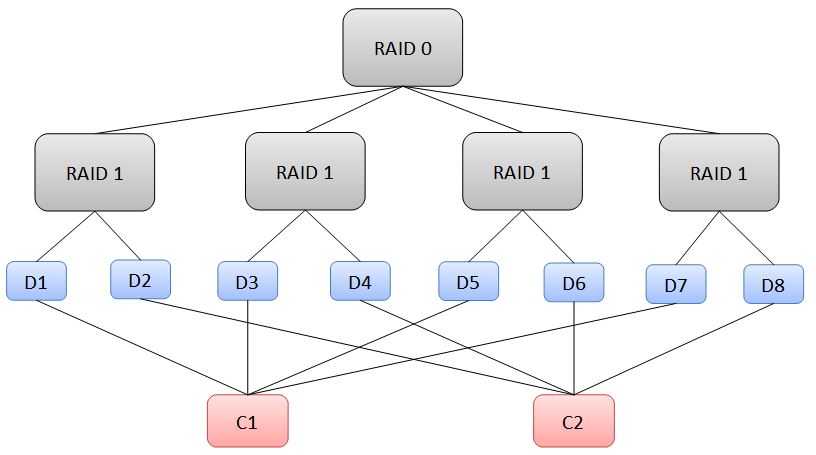

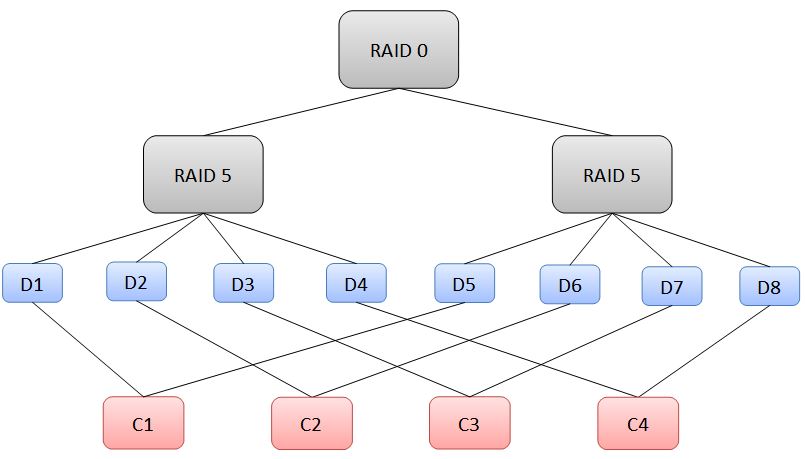

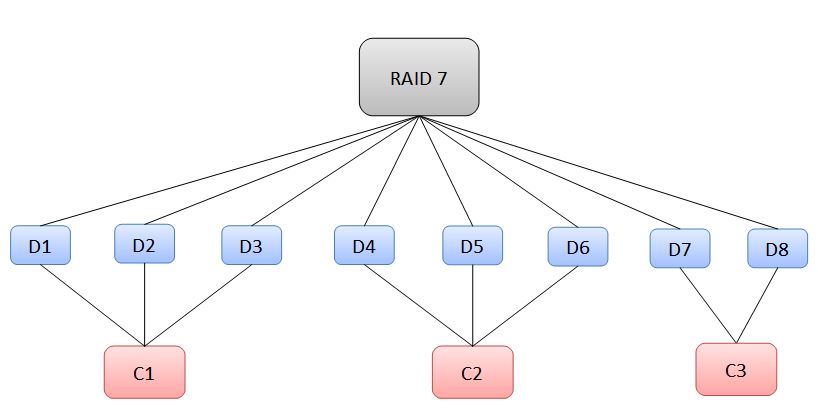

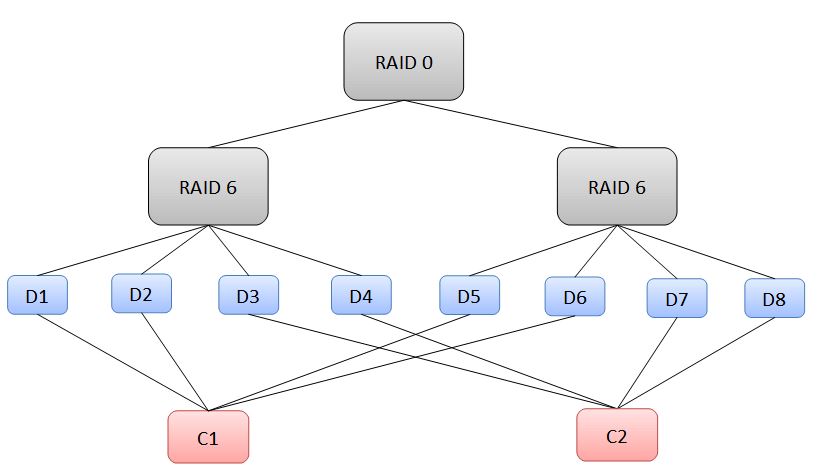

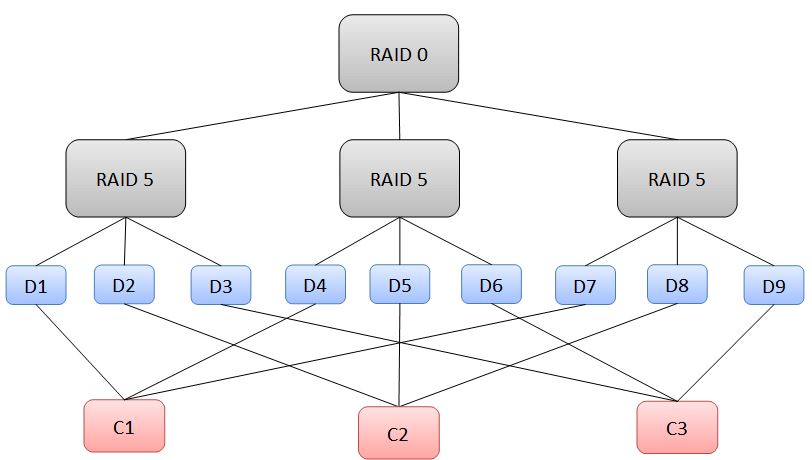

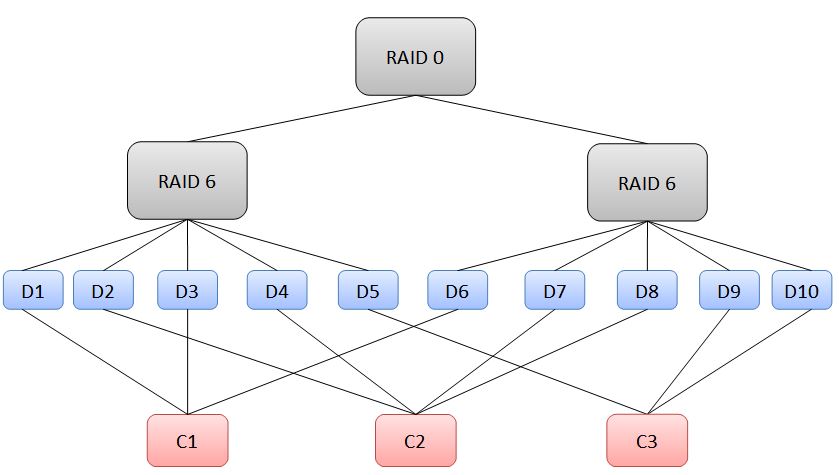

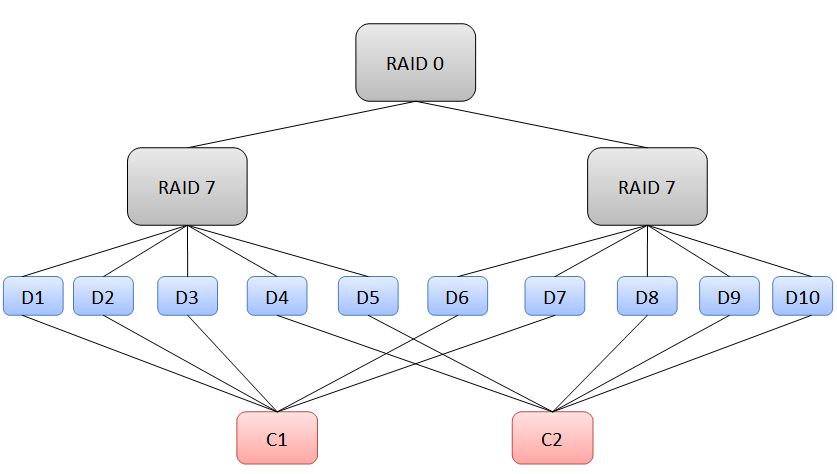

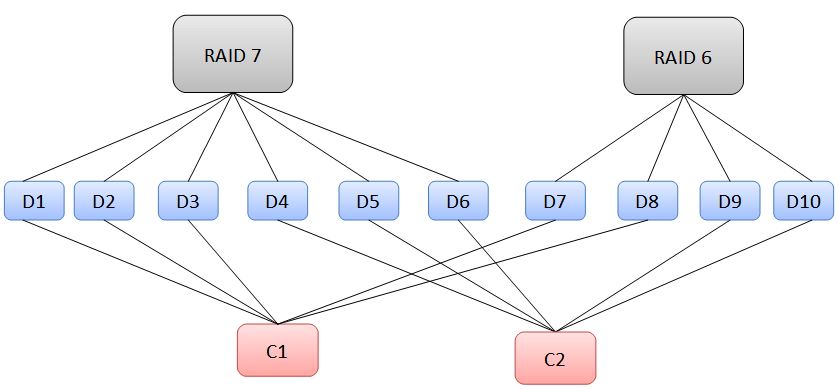

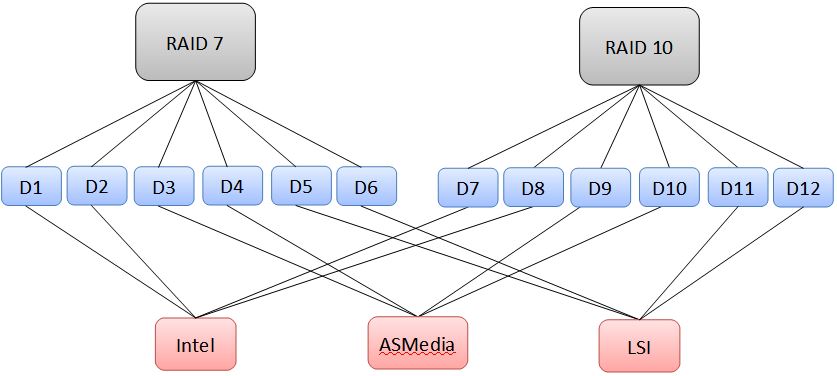

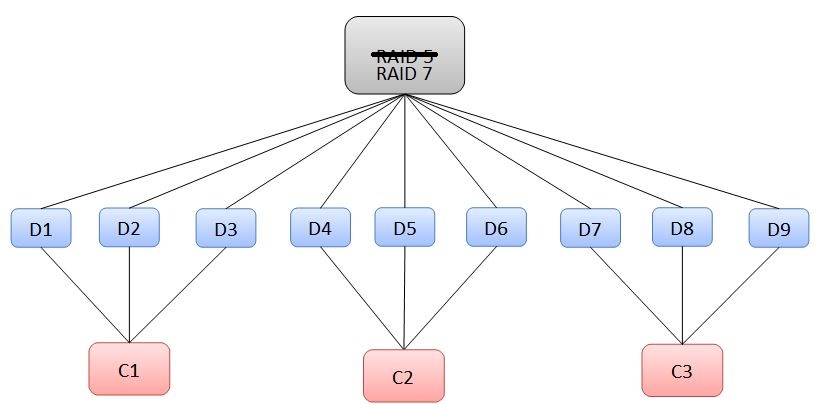

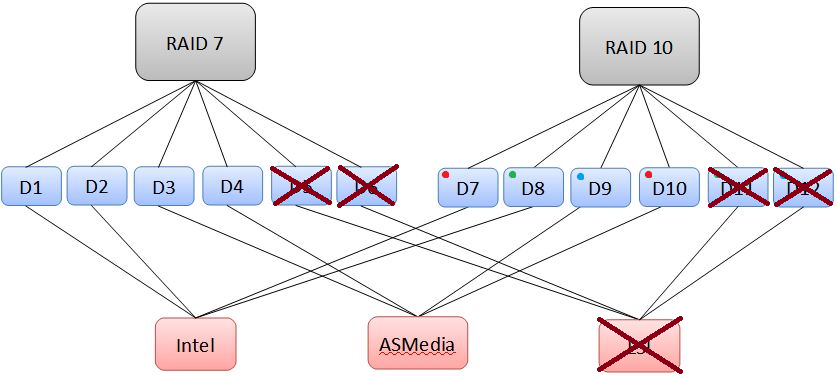

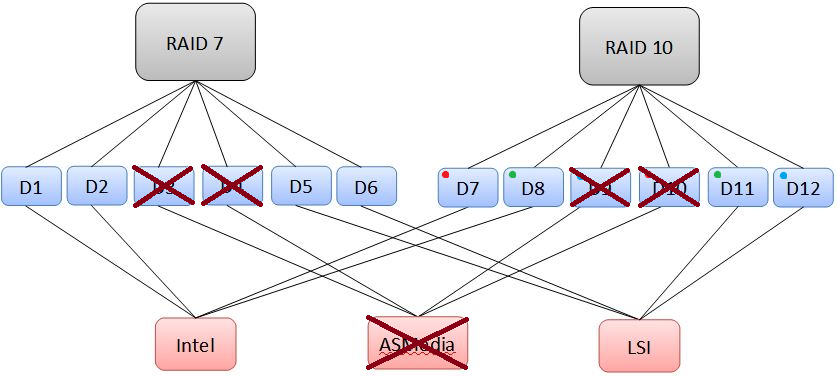

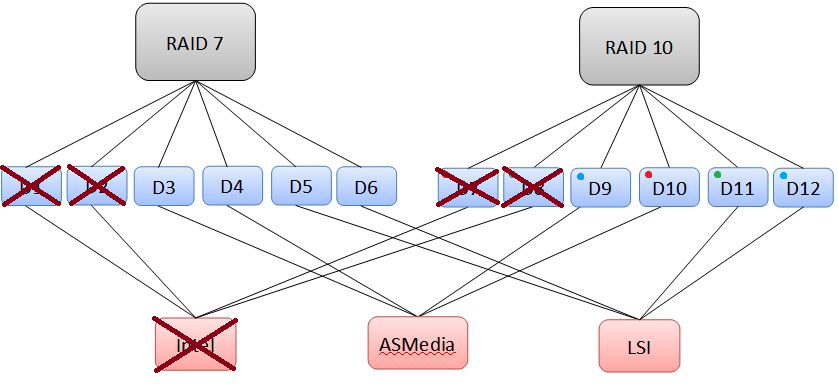

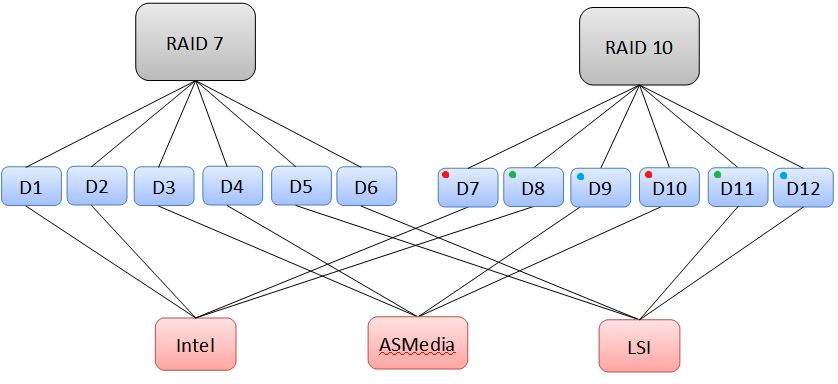

Reducing Single Points of Failure in Redundant Storage

In lots of the storage builds here on the forum, the primary method of data protection is RAID, sometimes coupled with a backup solution (or the storage build is the backup solution). In the storage industry, there are loads of systems that utilize RAID to provide redundancy for customers' data. One key aspect of (good) storage solutions is being resistant not only to drive failures (which happen a LOT), but to failures of other components as well. The goal is to have no single point of failure.

First, let's ask: What is a Single Point of Failure?

A single point of failure is exactly what it sounds like. Pick a component inside the storage system and imagine that it was broken, removed, etc. Do you lose any data as a result? If so, then that component is a single point of failure. From this point forward, a single point of failure will be abbreviated as SPoF.

Let's pick on looney again, using his system given here. Looney's build contains a FlexRAID software RAID array, which is composed of drives on two separate hardware RAID cards running as Host Bus Adapters, with a handful of iSCSI targeted drives. We'll just focus on the RAID arrays for now, since those seem like where he would store data he wants to protect. His two arrays are on two separate hardware RAID cards, which provide redundancy in case of possible drive failures. As long as he replaces drives as they fail, his array is unlikely to go down.

Now let's be mean and remove his IBM card, effectively removing 8 of his 14 drives. Since he only has two drives' worth of parity, is his system still online? No, we have exceeded the capability of his RAID array to recover from drive loss. If he only had this system, that would make his IBM card a SPoF, as well as his RocketRaid card. However, he has a cloud backup service, which is very reliable in terms of keeping data intact.
In addition, being from the Kingdom of the Netherlands, he has fantastic 100/100 internet service, making the process of recovering from a total system loss much easier. See why RAID doesn't constitute backup? It doesn't protect you from a catastrophic event.

In professional environments, lots of storage is done over specialized networks, where multiple systems can replicate data to keep it safe in the event of a single system loss. In addition, systems may have multiple storage controllers (not like RAID controllers), which allow a single system to keep operating in the event of a controller failure. These systems also run RAID to protect against drive loss.

In systems running the Z File System (ZFS), like FreeNAS or Ubuntu with ZFS installed, DIY users can eliminate SPoFs by using multiple storage controllers and planning their volumes to reduce the risk of data loss. Something similar can be done (I believe) with FlexRAID.

This article aims to provide examples of theoretical configurations, and will have some practical real-life examples as well. It will also outline the high (sometimes unreasonably high) cost of eliminating SPoFs for certain configurations, and aim to identify more efficient and practical ones.

Please note: There is no hardware RAID control going on here; it is all software RAID. When 'controllers' are mentioned, I am referring to the Intel/3rd party SATA chipsets on a motherboard, an add-in SATA controller (Host Bus Adapter), or an add-in RAID card running without RAID configured. The controllers only provide the computer with more SATA ports, and it is the software itself which controls the RAID array.

First, let's start with hypothetical situations. We have a user with some drives who wants to eliminate SPoFs in his system. Since we can't remove the risk of a catastrophic failure (such as a CPU, motherboard or RAM failure), we'll ignore those for now. We can, however, reduce the risk of downtime due to a controller failure.
This might be a 3rd party chipset, a RAID card (not configured for RAID) or other HBA which connects drives to the system. RAID 0 will not be considered, since there is no redundancy.

Note: For clarification, RAID 5 represents single-parity RAID, or RAID Z1 (ZFS). RAID 6 represents dual-parity RAID, or RAID Z2. RAID 7 represents triple-parity RAID, or RAID Z3.

Note: FlexRAID doesn't support nested RAID levels.

[spoiler=Our user has two drives.]

Given this, the only viable configuration is RAID 1. In a typical situation, we might hook both drives up to the same controller and call it a day. But now that controller is a SPoF! To get around this, we'll use two controllers, and set up the configuration as shown:

Now, if we remove a controller, there is still an active drive that keeps the data alive! This system has removed the controllers as a SPoF.

[spoiler=Our user has three drives.]

With three drives, we can do either a 3-way RAID 1 mirror or a RAID 5 configuration.

Let's start with RAID 1: Remembering that we want to have at least 2 controllers, we can set up the RAID 1 in one of two ways, shown below:

In this instance, we could lose any controller, and the array would still be alive.

Now let's go to RAID 5: In RAID 5, a loss of more than 1 drive will kill the array. Therefore, there must be at least 3 controllers to prevent any one from becoming a SPoF, shown below:

Notice that in this situation, we are using a lot of controllers given the number of drives we have. Note also that the more drives a RAID 5 contains, the more controllers we will need. We'll see this shortly.

[spoiler=Our user has four drives.]

We'll stop using RAID 1 at this point, since it is very costly to keep building the array. This time, our options are RAID 5, RAID 6 and RAID 10.

We'll start with RAID 5, for the last time.
Remembering the insight we developed last time, we'll need 4 controllers for 4 drives:

This really starts to get expensive, unless you are already using 4 controllers in your system (we'll talk about this during the practical examples later on).

Now on to RAID 6: Since RAID 6 can sustain two drive losses, we can put two drives on each controller, so we need 2 controllers to meet our requirements:

In this situation, the loss of a controller will down two drives, which the array can endure.

Last is RAID 10: Using RAID 10 with four drives gives us this minimum configuration:

Notice that for RAID 10, we can put one drive from each RAID 1 mirror on a single controller. As we'll see later on, this allows us to create massive RAID 10 arrays with a relatively small number of controllers. In addition, using RAID 10 gives us the same storage space as a RAID 6, but with smaller worst-case redundancy.

Given four drives, the best choices look like RAID 6 and RAID 10, with the trade-off being redundancy (RAID 6 is better) versus speed (RAID 10 is better).

[spoiler=Our user has five drives.]

For this case, we can't go with RAID 5, since it would require 5 controllers, and we can't do RAID 10 with an odd number of drives. However, we do have RAID 6 and RAID 7.

We'll start with RAID 6: Here we need at least 3 controllers, but one controller is underutilized:

For RAID 7, we get 3 drives' worth of redundancy, so we can put 3 drives on each controller:

In this case, we need two controllers, with one being underutilized.

[spoiler=Our user has six drives.]

We can now start doing some more advanced nested RAID levels. In this case, we can create RAID 10, RAID 6, RAID 7, and RAID 50 (striped RAID 5).
RAID 10 follows the logical progression from the four drive configuration:

RAID 6 becomes as efficient as possible, fully utilizing all controllers:

RAID 7 also becomes as efficient as possible, fully utilizing both controllers:

RAID 50 is possible by creating two RAID 5 volumes and striping them together as a RAID 0:

Notice that we have reduced the number of controllers for a single-parity solution, since we can put one drive from each stripe onto a single controller. This progression will occur later as well, when we start looking at RAID 60 and RAID 70.

We can obviously do a RAID 10, but now we can also venture into the realm of RAID 70 (striped RAID 7), in addition to RAID 60.

Our RAID 60 is slightly underutilized:

Finally, we have our RAID 70:

Our RAID 70 is relatively inefficient (using 12 drives would be better, with six drives per controller). However, it allows for increased performance in addition to providing a huge amount of redundancy for this setup. This is definitely not recommended for anything other than mission-critical data or perhaps priceless memories (wedding video/photos, important docs, etc.).

In this case, we can do either a RAID 50 or RAID 7, both of which require 3 controllers:

For RAID 50, we have 3 stripes of RAID 5:

For RAID 7, we have 3 controllers which are all fully utilized:

Both configurations give us 6 drives' worth of space for 3 controllers, with the tradeoff, once again, being worst-case redundancy (RAID 7 is better) versus speed (RAID 50 is better). For configurations with more drives, we aren't going to look at RAID 7, since we'll require more controllers.

With eight drives, we can jump into the realm of RAID 60 (striped RAID 6 volumes). We also have the options of RAID 10, RAID 50, and RAID 7.

RAID 10 looks just like it did before:

RAID 50 looks similar, but now we need more controllers since each volume has more drives:

RAID 7 looks pretty similar too:

RAID 60 looks pretty efficient with this number of drives.
Logically progressing from RAID 50, we can have two drives per stripe on one controller, for 4 drives per controller. This lets us use only two controllers:

Like we had for four drives, we now have a tradeoff between worst-case redundancy and speed between RAID 60 and RAID 10.
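The controller counts worked out in the examples above follow one rule: no controller may carry more drives from a single parity group than that group can afford to lose. A rough sketch of that rule, where the function names and the layout encoding (one list of group ids per controller) are made up for illustration and are not part of FlexRAID or ZFS:

```python
from collections import Counter
from math import ceil


def min_controllers(drives_per_group: int, tolerated_failures: int) -> int:
    """Fewest controllers so that no controller is a SPoF for one parity group.

    For nested levels (RAID 10/50/60/70) pass the size of ONE sub-array;
    extra sub-arrays share the same controllers.
    """
    if not 1 <= tolerated_failures < drives_per_group:
        raise ValueError("tolerance must be at least 1 and below the group size")
    return ceil(drives_per_group / tolerated_failures)


def layout_has_spof(layout: list[list[int]], tolerated_failures: int) -> bool:
    """layout[c] lists the parity-group id of every drive on controller c."""
    return any(
        count > tolerated_failures
        for controller in layout
        for count in Counter(controller).values()
    )


raid5_four_drives = min_controllers(4, 1)   # single parity, 4 drives
raid6_five_drives = min_controllers(5, 2)   # dual parity, 5 drives
raid7_nine_drives = min_controllers(9, 3)   # triple parity, 9 drives
raid60_eight_drives = min_controllers(4, 2) # two RAID 6 groups of 4 drives

# Eight-drive RAID 60: two drives of each group (0 and 1) per controller.
raid60_layout = [[0, 0, 1, 1], [0, 0, 1, 1]]
# Four-drive RAID 5 squeezed onto two controllers.
raid5_bad_layout = [[0, 0], [0, 0]]
```

With these inputs the sketch gives 4, 3, 3 and 2 controllers, matching the configurations described above. The RAID 60 layout passes the check, while the two-controller RAID 5 layout is flagged because losing either controller takes out two drives.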
